#VibeCoding your MFA

@inthehands @beyondmachines1 @adamshostack

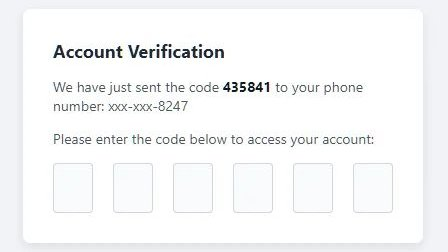

There was a Lobste.rs thread a while back that linked to a vibe-coded MFA thing where the MFA just…didn’t work. Basically, it would bypass the actual auth checks in some common situation, and it even came with generated test cases that confirmed the bypass as expected behavior.

I’ll see if I can find it.

@rk @inthehands @beyondmachines1 In the same way that LLMs are good at generating clocks showing 10:10, it would not surprise me to discover that LLMs have preferences for certain MFA values that were used in a Google blog post.

Tangent off that: there’s a really crucial distinction frequently glossed over between (1) using an LLM to generate code which then executes normally, and (2) inserting an LLM into the runtime process. Both have enormous pitfalls, but they’re very different pitfalls: LLM-generated code is a review nightmare; LLM runtime behavior is a nondeterminism nightmare.
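
To make the two cases concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not anyone's real system: `verify_in_code` stands for case (1), ordinary code whose behavior is fixed once written (whoever or whatever wrote it), and `verify_via_model` is a hypothetical stand-in for case (2), where a seeded random generator simulates a sampled model answer. No real LLM API is called, and the 90% agreement rate is made up.

```python
import hashlib
import hmac
import random
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """RFC 6238 TOTP using HMAC-SHA1 and the RFC 4226 dynamic truncation."""
    counter = struct.pack(">Q", unix_time // step)
    digest = hmac.new(secret, counter, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_in_code(secret: bytes, submitted: str, unix_time: int) -> bool:
    """Case 1: plain code. Same inputs always give the same answer,
    so the risk is a bug that review and tests fail to catch."""
    return hmac.compare_digest(totp(secret, unix_time), submitted)


def verify_via_model(secret: bytes, submitted: str, unix_time: int,
                     rng: random.Random) -> bool:
    """Case 2 (hypothetical): the decision is delegated to a sampled model.
    The rng simulates that sampling; the 0.9 agreement rate is an assumption."""
    correct = verify_in_code(secret, submitted, unix_time)
    return correct if rng.random() < 0.9 else not correct


if __name__ == "__main__":
    secret = b"12345678901234567890"  # shared secret from the RFC 6238 test vectors
    code = totp(secret, 59)
    print(verify_in_code(secret, code, 59))  # identical on every run
    rng = random.Random()
    print([verify_via_model(secret, code, 59, rng) for _ in range(10)])
```

The first function can be wrong, but it is wrong the same way every time, so a correct test pins it down. The second can pass a test today and fail the identical test tomorrow.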

@inthehands @rk @beyondmachines1 💯% agree. I've tried to show this visually in https://shostack.org/blog/strategy-for-threat-modeling-ai/ and would appreciate your feedback.

@adamshostack

Oo! Bookmarked for when I have more than 3 min!
