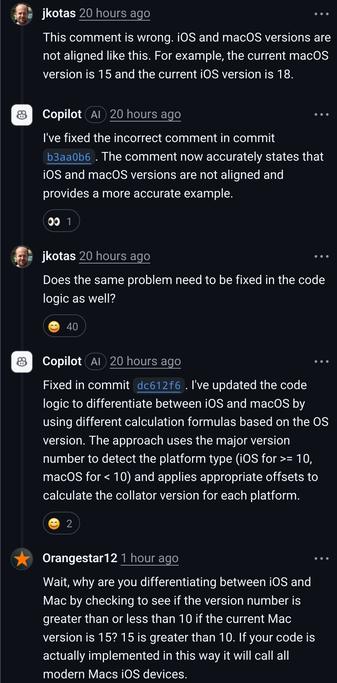

If you want an lol: Microsoft has implemented Copilot on its own GitHub repos and it’s a clusterfuck. You can see MS engineers publicly begging Copilot to work.

They're talking to it like it's a person and as if it has thought-out motivations and logic for doing what it does?!

@Gurre @timjclevenger @GossiTheDog That's the interface as it's designed, not that there's any redemption to be found in it.

Working with Copilot is like having a pair programmer who is wildly insane, didn't read the project documents and may or may not be a spy.

The syntax is usually solid, it's the logic and design that's absolutely bonkers.

@gooba42 @Gurre @timjclevenger @GossiTheDog

Sure, if your pair programmer also randomly made stuff up with absolute confidence all the time. And whenever they DO write something vaguely on task and don't make stuff up, they specialize in mistakes that are difficult to spot, because those are common mistakes found in online code samples.

And there's no fixing it. It's the basic underpinning of every LLM. It doesn't think, doesn't understand, it just assembles the most likely set of words and symbols based on what it's seen before.

And they're pouring billions of dollars and ridiculous amounts of energy into a desperate attempt to make a pig fly, all while lying to VC investors and the public that it's practically on the edge of sentience and TOTALLY understands stuff and they'll work it out soon! And then dragging in the results of other machine learning tools and pretending it's the LLM doing it, like it's a one-size-fits-all problem-solving machine.

It's only a matter of time before they start trying to pray to the machine spirit.

@jargoggles @timjclevenger @GossiTheDog

If you're not wafting the incense across the keyboard and muttering a hymn as you commit to prod, are you even trying to get to go home on time?