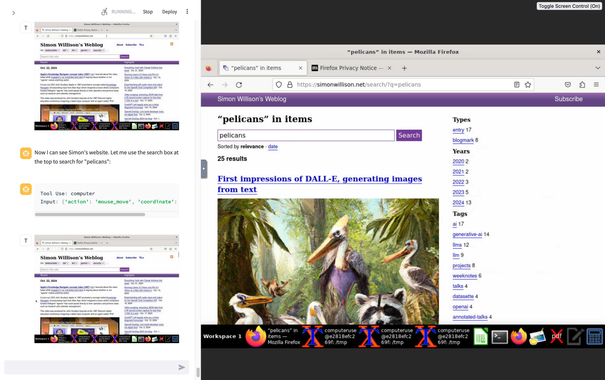

Anthropic released a fascinating new capability today called "Computer Use": a mode of their Claude 3.5 Sonnet model where it can accept screenshots of a remotely operated computer and send back commands to click on specific coordinates, enter text, and so on.
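
To make that screenshot-in, command-out loop concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `FakeComputer`, `scripted_model`, `run_loop` and the command dictionaries are hypothetical names and shapes, not Anthropic's API or schema, and the "model" is just a canned script so the example runs without any network access.

```python
from dataclasses import dataclass, field


@dataclass
class FakeComputer:
    """Hypothetical stand-in for the remote machine; it only records requests."""
    log: list = field(default_factory=list)

    def screenshot(self) -> bytes:
        # A real setup would capture the screen; this returns placeholder bytes.
        return b"fake-png-bytes"

    def click(self, x: int, y: int) -> None:
        self.log.append(("click", x, y))

    def type_text(self, text: str) -> None:
        self.log.append(("type", text))


def scripted_model(screenshot: bytes, step: int) -> dict:
    """Hypothetical stand-in for the model: one canned command per screenshot."""
    script = [
        {"action": "click", "coordinate": [120, 48]},
        {"action": "type", "text": "hello"},
        {"action": "done"},
    ]
    return script[step]


def run_loop(computer, model, max_steps: int = 10) -> list:
    """Send a screenshot, apply the returned command, repeat until 'done'."""
    for step in range(max_steps):
        command = model(computer.screenshot(), step)
        action = command["action"]
        if action == "click":
            x, y = command["coordinate"]
            computer.click(x, y)
        elif action == "type":
            computer.type_text(command["text"])
        elif action == "done":
            break
        else:
            raise ValueError(f"unknown action: {action!r}")
    return computer.log


if __name__ == "__main__":
    print(run_loop(FakeComputer(), scripted_model))
```

In a real setup the scripted function would be replaced by a call to the model, and the fake computer by something that actually captures the screen and drives the mouse and keyboard.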

My notes on what I've figured out so far: https://simonwillison.net/2024/Oct/22/computer-use/