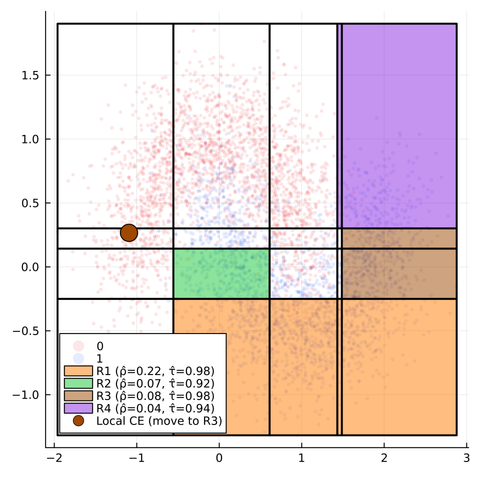

Counterfactual explanations are typically local: they describe how the features of a single sample (for example, an individual) would need to change for the model to produce a different prediction. This type of explanation is especially useful when opaque models are used to make decisions that affect individuals, who in the EU have a right to an explanation.

However, when we mainly want to explain the general behavior of an opaque model, local explanations may fall short. In that case, group-level or global explanations are often more useful.

To address this need, CounterfactualExplanations.jl now supports Trees for Counterfactual Rule Explanations (T-CREx), a novel, high-performing approach to global counterfactual explanations proposed by Tom Bewley and colleagues in their recent #ICML2024 paper: https://proceedings.mlr.press/v235/bewley24a.html

Check out our latest blog post to find out how you can use T-CREx to explain opaque machine learning models in #Julia: https://www.taija.org/blog/posts/counterfactual-rule-explanations/