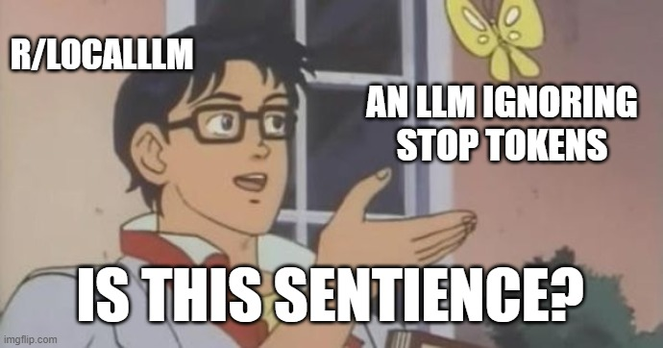

Good morning folks

It's not sentient, it's just an LLM that doesn't know when to shut the fuck up.

I'm not saying that LLMs ignoring stop tokens and just going on their own weird dream-cycle exploration of time and space isn't interesting, but I am absolutely saying it's not sentience.

Basically:

* You ask a question

* The model answers it

* The model ignores the stop token, the trigger to stop talking built into the source data (probably a setup issue)

* The model then goes 'well... what's next?'

* Probability says that what comes next is user input

* The model simulates the user asking a question

* The model then answers that question

* Cycle continues

* Things go off the rails eventually.
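
If it helps, here's a toy sketch of that loop in Python. Nothing in it is a real model or a real library: `STOP`, `SCRIPT`, and `generate` are all made up for illustration, and the "model" is just a fixed list of tokens standing in for "whatever is most probable next". The only point is what happens when the stop token is honored versus dropped.

```python
from itertools import islice

STOP = "<|end|>"  # hypothetical stop token; real models each define their own

# Toy stand-in for a model: a fixed script of "most likely next tokens".
# After each answer it emits STOP, then what probably comes next: a user turn.
SCRIPT = [
    "Assistant:", "Paris.", STOP,
    "User:", "And", "Germany?",
    "Assistant:", "Berlin.", STOP,
    "User:", "And", "Mars?",
    "Assistant:", "Uh...", STOP,
]

def generate(honor_stop: bool, max_tokens: int = 12) -> list[str]:
    """Read up to max_tokens from the script, optionally halting at STOP."""
    out = []
    for token in islice(SCRIPT, max_tokens):
        if token == STOP:
            if honor_stop:
                break      # correct setup: the answer ends here
            continue       # broken setup: the cue is ignored, generation goes on
        out.append(token)
    return out

print(" ".join(generate(honor_stop=True)))
print(" ".join(generate(honor_stop=False)))
```

With the stop token honored you get one answer and silence. With it ignored, the same "model" happily writes the user's next question and answers that too, until it runs out of token budget.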

You ever have a coworker who answers a question, poses a question to themselves, answers that question, then keeps going?

Yeah, it's exactly that. They're missing the 'and now I stop talking' cue.

@DarkestKale I have the ability to stop rambling some days! On those days, I'm no longer sentient, I guess.
