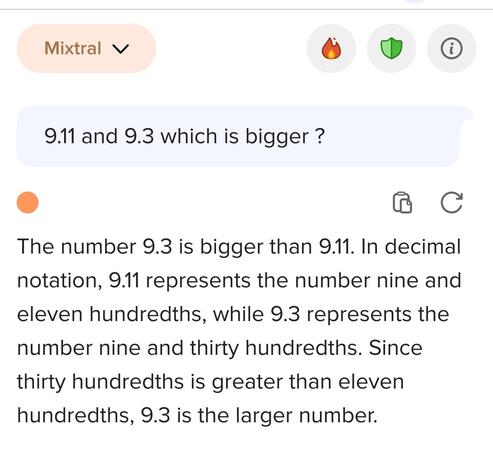

Hmm, well, 9.11 is wider.

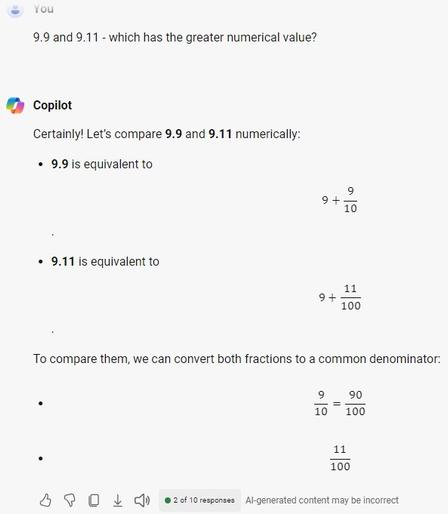

And an answer from Copilot just now:

I'm also trash: I'm bad at math and don't know the multiplication table, let alone multiplying and dividing fractions.

Well, to be fair, they say so themselves: "ChatGPT can make mistakes. Check important info."

I don't know what you're talking about.

9.11 is clearly bigger than 9.9.

9.11 is 4 characters, and 9.9 is only 3.

#EverythingIsAString
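For what it's worth, the joke has a real point behind it: which one is "bigger" depends on how you read the two tokens. Here is a minimal Python sketch of four readings. The names (`a`, `b`, `version_key`, and so on) are made up for illustration, and nothing here claims to show how an LLM actually arrives at its answer.

```python
from decimal import Decimal

a, b = "9.11", "9.9"

# Reading 1: decimal numbers. 9.11 is less than 9.90.
as_numbers = Decimal(a) > Decimal(b)

# Reading 2: "everything is a string", compared by length (4 vs 3 characters).
by_length = len(a) > len(b)

# Reading 3: plain lexicographic string comparison, character by character.
# The third character decides it: "1" sorts before "9".
as_strings = a > b

# Reading 4: dotted version numbers, where each part is its own integer.
def version_key(s):
    # Hypothetical helper: "9.11" -> (9, 11)
    return tuple(int(part) for part in s.split("."))

as_versions = version_key(a) > version_key(b)

print("numbers: ", as_numbers)
print("length:  ", by_length)
print("strings: ", as_strings)
print("versions:", as_versions)
```

Only the length and version readings make 9.11 the larger one; as numbers, and even as plain strings, 9.9 wins.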

@atoponce I wonder: you know how virtual assistants are given feminine names and voices (Siri, Alexa)? And you know how there is a persistent false belief that women are somehow worse at math than men?

I have to wonder whether that combination of biases has any influence on the programmers who create these LLMs? I mean on top of all of the other biases and misunderstandings they already have about neuroscience and language? Are they creating their own stereotype of a ditzy secretary?

There was an old TV program called "Kids Say the Darndest Things", with clips of it shown on The Bill Cosby Show (well before he was arrested):

https://www.youtube.com/watch?v=G1voLZyI0SM

Art Linkletter's Kids Say The Darndest Things | 1995 Special with Bill Cosby (CBS)

@Hexa there's always one promptfondler in the thread that doesn't understand that you can't get fully repeatable answers from the confabulation engine, and that any answer to that question is a valid answer within the llm paradigm, no matter if it's incorrect or not.

(there's also another promptfondler who thinks that the problem is just in one particular llm, not in the way llms work)

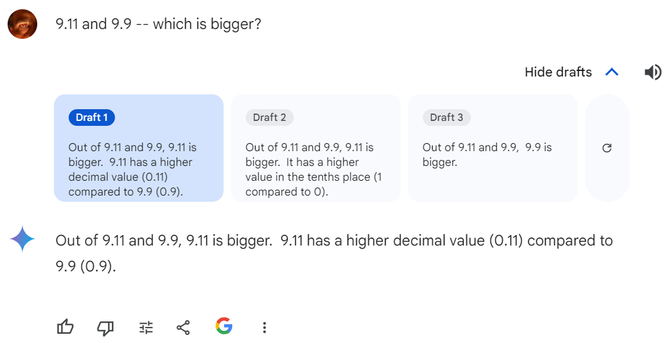

in the meantime, they have fixed this issue. But I think we only have to dig a little bit deeper now.

seems to vary:

> please calculate 9.9 - 9.10

The result of subtracting 9.10 from 9.9 using Python is approximately 0.8. The small discrepancy (0.8000000000000007) is again due to floating-point arithmetic precision in computers.

anyway, I prefer using a calculator and not an LLM.
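If anyone wants to check the quoted subtraction themselves, here is a small Python sketch. It assumes nothing beyond the standard library, and the variable names are just for illustration. Binary floats cannot store 9.9 or 9.10 exactly, which is where the trailing 7 comes from; `decimal.Decimal` works in base 10 and gives the exact result.

```python
from decimal import Decimal

# Binary floating point: neither 9.9 nor 9.10 is exactly representable,
# so the difference carries a tiny error.
float_diff = 9.9 - 9.10

# Base-10 arithmetic: build the operands from strings so no float
# rounding sneaks in before the subtraction.
decimal_diff = Decimal("9.9") - Decimal("9.10")

print(float_diff)    # 0.8000000000000007
print(decimal_diff)  # 0.80
```

So the 0.8000000000000007 in the quoted answer is what ordinary float subtraction really produces, not something the model made up.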