Meet Nightshade and Glaze: free tools created at the University of Chicago for creators to protect their work from unwanted AI training and AI mimicry.

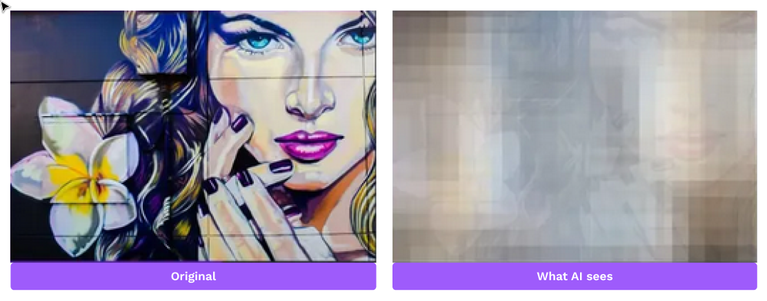

Glaze is a tool that disrupts art style mimicry. Drawing on how AI models work, it computes minimal changes to an artwork that are nearly invisible to humans but look drastically different to AI. Best of all, these changes cannot be easily removed from the artwork with image manipulation.

Credit: @zemotion on X

Ways to protect yourself

✅ Add a copyright notice

✅ Make sure copyright-related metadata is included when content is published or shared, and is not stripped away

✅ Add license and terms

✅ Don't upload high-quality originals directly to platforms
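For anyone who wants to try the metadata step by hand, here is a minimal sketch in Python (standard library only). It builds an XMP packet that carries a copyright notice and usage terms using the standard `dc:rights`, `xmpRights:UsageTerms`, and `xmpRights:Marked` properties. The helper names (`build_xmp`, `lang_alt`) and the sample notice and terms are made up for illustration. Saving the result as a sidecar file next to the image is an assumption about your workflow, and whether a given platform keeps or reads this metadata is something to check yourself.

```python
import xml.etree.ElementTree as ET

# Standard XMP / Dublin Core namespaces.
NS = {
    "x": "adobe:ns:meta/",
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "dc": "http://purl.org/dc/elements/1.1/",
    "xmpRights": "http://ns.adobe.com/xap/1.0/rights/",
}
for prefix, uri in NS.items():
    ET.register_namespace(prefix, uri)

XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"


def q(prefix, name):
    """Return the namespace-qualified tag name ElementTree expects."""
    return f"{{{NS[prefix]}}}{name}"


def lang_alt(parent, prefix, name, text):
    """Add a language-alternative text property (hypothetical helper)."""
    prop = ET.SubElement(parent, q(prefix, name))
    alt = ET.SubElement(prop, q("rdf", "Alt"))
    li = ET.SubElement(alt, q("rdf", "li"), {XML_LANG: "x-default"})
    li.text = text


def build_xmp(copyright_notice, usage_terms):
    """Build an XMP packet as a string (hypothetical helper)."""
    root = ET.Element(q("x", "xmpmeta"))
    rdf = ET.SubElement(root, q("rdf", "RDF"))
    desc = ET.SubElement(rdf, q("rdf", "Description"), {q("rdf", "about"): ""})
    lang_alt(desc, "dc", "rights", copyright_notice)
    lang_alt(desc, "xmpRights", "UsageTerms", usage_terms)
    # "Marked" flags the work as rights-managed rather than public domain.
    ET.SubElement(desc, q("xmpRights", "Marked")).text = "True"
    return ET.tostring(root, encoding="unicode")


# Sample values are placeholders, not recommended wording.
xmp = build_xmp("(c) Jane Artist. All rights reserved.",
                "Not licensed for AI training.")
```

To use it, write `xmp` to a file such as `artwork.xmp` (a placeholder name) or embed it with a metadata tool of your choice, then confirm the fields survive after you upload.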

Automate all of this and more with Macula

©️ Macula takes care of the relevant metadata, including AI-related statements

👁️ Share images anywhere without giving away the originals

📈 Gain exposure and grow on your terms

Learn more and sign up today: https://macula.link/