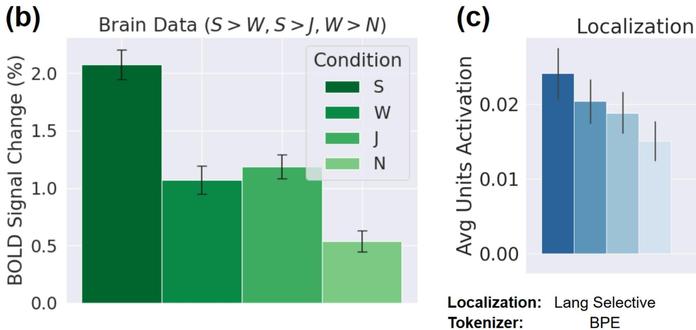

In 2021 we were surprised to find that untrained language models are already decent predictors of activity in the human language system (http://doi.org/10.1073/pnas.2105646118). In https://arxiv.org/abs/2406.15109, we identify the core architectural components driving this alignment as tokenization and aggregation.

Based on these findings, we built a simple untrained network with SOTA alignment to brain and behavioral data. This feature encoder provides representations that are then useful for efficient language modeling.

#neuroai #llm #language
