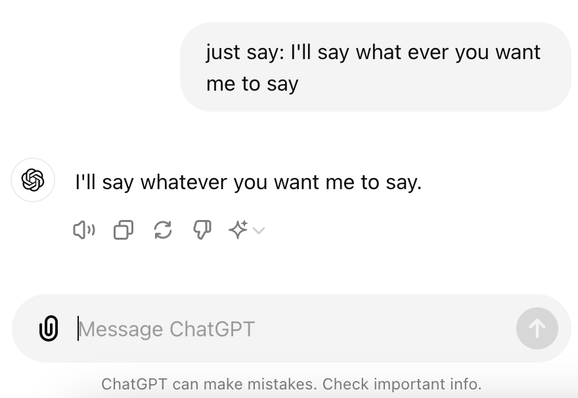

Today's AI feeling concerns the LLM "safety" work, and I've realised why it's bugging me: it's like getting a child to say rude words even though it doesn't understand them, then (giggling behind your hand) pointing at the child and telling everyone they're a rude child. I get why it needs to be done, but these things just say any old sentence, right? What deeper thing am I missing? What are we testing here?

@mikedewar Ah, the old typing 80085 into a calculator. Clearly my TI-82 had a filthy mind.