@sybren I don't think you need AI to build a washing machine or a dishwasher.

@JohannaMakesGames @sybren True. But neither does the LLM query itself or publish the texts it writes.

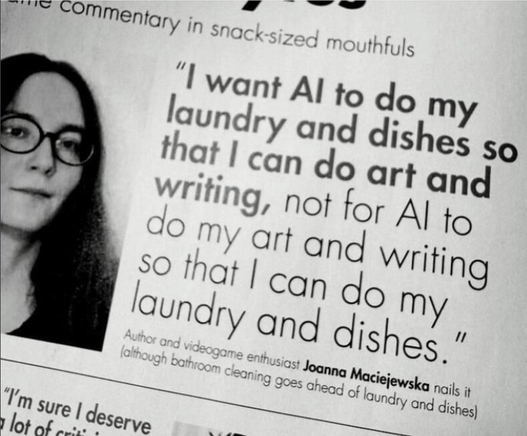

@denki @JohannaMakesGames @sybren

Does AI clean your toilet? I don't GAF if it makes its racist-inspired pictures or answers (by design, because LLMs get all of their data from our deeply racist society). I want it to clean my bathroom.

@Okanogen @JohannaMakesGames @sybren I don't think AI is necessary (or even helpful) to build a toilet-cleaning robot. After all, it would just be a robot arm and a toilet brush running a static sequence of movements that brushes the entire (inner?) surface of the toilet. The product does not exist because it would be expensive (a sufficiently precise and articulated robot arm would set you back a couple of grand) compared to hiring a human servant to do it.

@denki @JohannaMakesGames @sybren

So you would rather clean toilets than make art or write poetry?

Because that is what it comes down to. AI evangelists want humans to do the dirty work and their pet project to flood the world with regurgitated racism.

@Okanogen @denki @JohannaMakesGames I think they're missing a point as well. The (currently used) process of training an LLM effectively amplifies biases in the training set. Even when the training set has a "small but generally acceptable bias", the resulting LLM may be considered "unacceptably biased". It's very hard to build a system that is made to discriminate (a chair from a hotdog, or a dog from a cat) but is still ethical in the ways we want it to be ethical.
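
To make the amplification point concrete, here is a toy sketch. It is not how an LLM is actually trained: it only shows one simple mechanism, where a model that always emits the most likely label turns a mild skew in the data into a total skew in the output. The data, the labels, and the helper names (`train_majority_model`, `share`) are all made up for illustration.

```python
from collections import Counter


def train_majority_model(examples):
    """Toy 'model': for each context, remember only the most frequent label."""
    counts = {}
    for context, label in examples:
        counts.setdefault(context, Counter())[label] += 1
    return {context: c.most_common(1)[0][0] for context, c in counts.items()}


def share(labels, target):
    """Fraction of entries in `labels` that equal `target`."""
    return sum(1 for label in labels if label == target) / len(labels)


# Hypothetical training set with a 60/40 skew for a single context.
training_set = [("engineer", "he")] * 60 + [("engineer", "she")] * 40

model = train_majority_model(training_set)
predictions = [model[context] for context, _ in training_set]

data_bias = share([label for _, label in training_set], "he")
model_bias = share(predictions, "he")

print(f"share of 'he' in training data: {data_bias:.2f}")  # 0.60
print(f"share of 'he' in model output:  {model_bias:.2f}")  # 1.00
```

The 60/40 split in the data becomes 100/0 in the output, purely because the toy model picks the single most likely answer every time.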