I would like to send everyone who trains an LLM on user data to jail. Ethics jail, where they must all learn the basic ethics of user consent and data privacy.

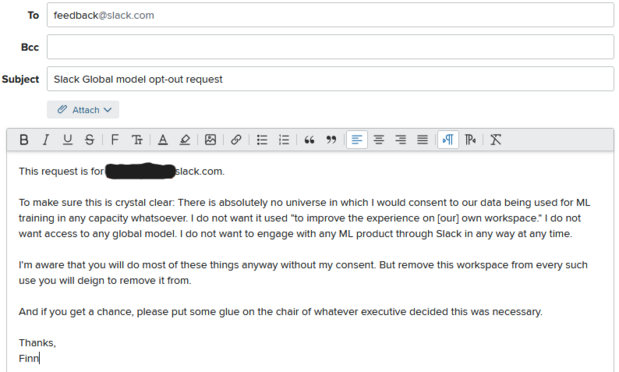

Latest exhibit: Slack is training a *global* model on conversations in your workspace. To opt out, you need to email them. This is unconscionable and extremely likely to leak private information.