The LinkedIn tone is very much of the "temporarily embarrassed millionaire" variety... "Google should do whatever they like, and I should like it! Because I might be Google one day!"

The Twitter tone is much more polarized, ranging from "Are they demented?" to "Uh, everybody that matters does that." (Meaning, all the abusive market-dominant players.)

It means that they're going from a mix of one-to-one and one-to-many BGP relationships, to purely one-to-one relationships. So thousands of other networks that they currently have direct connections to are getting cut off, and being offered the opportunity to petition Google for reconnection. Since it was essentially all the littlest networks that they had one-to-many relationships with, and the littlest networks are already doing the one-to-many thing _for reasons of simplicity and efficiency_, the littlest networks are also the ones least likely to be in a position to petition for reinstatement of those connections. Instead, their traffic to Google (meaning, their customers' traffic to Google, so, not anything they can control) will now reach Google through transit. So it means that the littlest networks will now need to pay to reach Google. And they're not paying Google, they're paying their larger competitors. So, Google's action has the net effect of moving all the small networks further away from them, decreasing their performance, and increasing their costs. At the same time, it enriches the larger networks. This is anticompetitive, and aggravates the "digital divide."

@woody "simplify peering operations"

...by increasing their explicit peer count by who-knows-how-many?

"and increase routing security"

...by not leveraging the routing security some IXes have implemented? 🤨 (I'm looking at you, MICE. 😘)

This seems, yes: backwards. Which is maybe on-brand for Google, these days.

@woody saw this at the tiny SOLIX in Stockholm yesterday. Google has a 30G link there that a single RS peer (INETCOM🇷🇺) was completely saturating all day every day, and that relationship was the bulk of the traffic for the whole IX.

Now that Google no longer accepts the RS routes they're down to under 5Gbps of traffic—the links are no longer saturated. Things got a lot better for those peering bilaterally with Google.

@Drwave @woody overall, no: Google requiring bilateral peering agreements with operators, rather than automatically peering with anyone over a route server at an internet exchange, will increase the average route distance between Google and eyeball networks and thus make the Internet slower.

But I highlighted a case where the change had a silver lining because it had a side effect of eliminating congestion on this particular set of 3x10G Google network ports.

That, in itself, isn't a bad thing. Two large networks showing up and peering on the route server, where everybody else has equal access to both of them, is exactly what you want. Even if there's a big scale difference between them and the next largest networks... it _should_ create a fair and open opportunity for growth for all of the networks, equally including the small ones. Which is the best possible situation.

But it depends on the large networks not getting lazy and allowing their ports to get congested, which harms everyone.

Agreed, for the reasons @karppinen says... But again, this was an own-goal on Google's part, because they were trying to cram more traffic than would fit into three 10G ports, instead of just upgrading to a single 100G or 400G port, which would have accommodated the traffic without congestion. Which, again, comes down to laziness on their part. Didn't provision enough bandwidth in the first place, then added another 10G port instead of upgrading, then added ANOTHER 10G port instead of upgrading, then pulled the plug entirely instead of upgrading.
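
To put rough numbers on the port-sizing point, here is a minimal sketch of the utilization arithmetic. The offered load of 40 Gbps is purely an assumption for illustration: the thread only establishes that the three 10G ports were saturated, so real demand was at least 30 Gbps. The function name `utilization` is hypothetical.

```python
def utilization(offered_gbps: float, port_gbps: list[float]) -> float:
    """Offered load divided by total port capacity; >= 1.0 means congestion."""
    return offered_gbps / sum(port_gbps)


# Assumed demand. The thread only says 3x10G was saturated (so >= 30 Gbps).
offered = 40.0

options = {
    "3x10G": [10.0, 10.0, 10.0],
    "1x100G": [100.0],
    "1x400G": [400.0],
}

for name, ports in options.items():
    u = utilization(offered, ports)
    status = "congested" if u >= 1.0 else "ok"
    print(f"{name}: {u:.0%} of capacity ({status})")
```

Under that assumed load, only the 3x10G bundle comes out congested; either single larger port leaves headroom.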

So... Route servers are like hubs, within Internet exchange points, where networks exchange the routing data that allows Internet traffic to flow between networks. The Internet exchange point is like a road intersection, and the routing data is the road signs which tell people which exit to take to get to their destination. Google is saying that they're taking down the road sign that says "get to Google web sites by going this way." They're now only going to tell people (other network operators) that privately. They'll decide which networks they tell, and which they don't. And it's actually a fair bit of work for them to arrange these bilateral relationships ("bilateral peering") across which they provide this information. So, inevitably, what will happen is that Google will only bother to set up these special bilateral relationships with the largest other networks. Once that happens, the largest networks will then charge the smaller ones for access to Google's network ("You don't know how to get your customers' traffic to Google anymore, but we do... pay us, and we'll get it there"), and the smaller networks' customers will receive poorer service than the direct customers of the larger networks, making the smaller networks more expensive, less performant, and less competitive.

Google is within their rights to do this, but it's antisocial, and it creates significant new costs, which are borne exclusively by the smallest networks, and their customers. So it worsens the "digital divide." It needlessly transfers money from small networks to large ones, and doesn't even really save Google any money.

It doesn't _actually_ give them any new capability, it just makes it slightly simpler to differentiate between the peers. So, they can be lazier. Except that now they have to deal with a lot of new peering sessions, which is a lot of work. So, if they're trying to be lazy, my guess is that they're not going to bother with most of those new peering sessions either. Since it's easier not to. And that externalizes a whole lot of expenses onto other networks, and the networks that pay those expenses are the smallest ones, so that makes everything worse.

Some do, and even for those that don't, policies can be enacted based on the next-hop or second-hop ASN. It's not that big a deal, lots of networks do it. It's just not _quite_ as simple as applying policies on a per-session basis.
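
As a rough illustration of keying policy on an ASN in the path rather than on the BGP session, here is a minimal sketch. It is not any particular router's configuration language: the `Route` type, the `POLICY` table, and the `hop_index` parameter are all hypothetical, and the ASNs and prefixes come from the documentation ranges. With a transparent route server the announcing peer's ASN is leftmost in the path (`hop_index=0`); if the route server inserts its own ASN, the peer is the second hop (`hop_index=1`).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    prefix: str
    as_path: tuple[int, ...]  # leftmost ASN is the most recent hop


# Hypothetical policy table keyed by ASN (documentation ASNs).
POLICY = {
    64496: "accept",
    64497: "reject",
}
DEFAULT_ACTION = "accept"  # assumption: unlisted ASNs are accepted


def policy_asn(route: Route, hop_index: int = 0) -> int:
    """Return the ASN that policy is keyed on for this route."""
    if len(route.as_path) <= hop_index:
        raise ValueError(f"AS path too short for hop {hop_index}: {route}")
    return route.as_path[hop_index]


def accepted(routes: list[Route], hop_index: int = 0) -> list[Route]:
    """Keep only routes whose keyed ASN is not rejected by POLICY."""
    return [
        r for r in routes
        if POLICY.get(policy_asn(r, hop_index), DEFAULT_ACTION) == "accept"
    ]


routes = [
    Route("192.0.2.0/24", (64496,)),
    Route("198.51.100.0/24", (64497, 64500)),
    Route("203.0.113.0/24", (64499,)),
]

for r in accepted(routes):
    print(r.prefix, r.as_path)
```

The same table could drive per-session policy too; the only extra step with a route server is extracting the right hop from the path first.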

@woody I worked at a company that took this stance (direct peering only, no route server peering unless required). At the time, responsiveness to IX peering requests was not great, so some folks decided to just static route the appropriate traffic to our exchange address at a few locations. You can imagine how awesome that was when we were trying to take a router out of service. 😆

I can't imagine this going much better for Google.

Yeah... Without question they have the right to do it. And it's hardly an unusual position for a market-dominant network to take. And packets will still get through. However, it will have real costs for the customers of small networks, who will see lower performance (and higher wholesale costs, which will, one way or another, be distributed to customers). And those costs will be unequally divided, since this will affect small networks but not large networks.

So, it worsens the digital divide. Google being evil, if not in a particularly unique way.

@woody The justification at my former employer was "better control over traffic," but I think given a little bit of extra time we could have had the same level of granularity with route servers using AS path filtering.

In hindsight, it was mostly a "because we can, and we can't be bothered to put in more effort" move, which given the size and culture of the company is less than surprising.

It's unfortunately not unusual for market-dominant networks to do this. It's bad, but it's hardly unique. This is just a step in the wrong direction for one of the larger networks that a lot of people use every day.

I'm pessimistic that there will ever be meaningful net neutrality regulation. And content networks have always managed to lobby to have NN legislation, where it exists, applied only to eyeball networks, not content, so they can further their advantage.

e.g., we were peering with Charter/Spectrum and the idiots wouldn't upgrade the peering link, which was still 100 Mbit, so it made life harder for us as we had to deal with routing around it when the link was at capacity

Gotta say, I just started using Kagi, and I'm loving it so far. I tried Neeva, when it was a thing, and it was ok, but I like Kagi a lot more than either Neeva or Google.

Eh, try it, what's to lose? The first hundred queries are free. I've been using it with Orion and watching BlockBlock and Little Snitch closely, and there's been _no_ unexpected chatter. Which is, frankly, shocking in this day and age. In the best possible way.

@woody I think the effect and reasoning behind it very much depend on whether they accept private peering for nearly all IX members or not. Getting to know their peering partners better and getting reliable contact data about them could be reasonable points behind this change.

On the URL you show in your image there are a few conditions they mention. I think they don't sound unreasonable. There is just one critical point: "- Sufficient traffic volume (as determined by Google, at its discretion)"

So, what traffic volume is that? Does that also cover the traffic very small ASes have with Google?

* many larger networks are poor at peering,

* the idea that maintaining thousands of extra bilateral sessions is easier in aggregate is fairly laughable, even with config automation,

* read in parallel with the current published Google peering policy, thousands of single-ix-single-connection peers would not be eligible to establish bilateral peering and would be worse off.
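
For a sense of scale on the session-count point, here is a small sketch of the arithmetic. The figures are assumptions, not data from the thread: a hypothetical exchange with 500 members and 2 redundant route servers. The function names are likewise made up for illustration.

```python
def sessions_via_route_servers(route_servers: int = 2) -> int:
    """Sessions one member needs to reach everyone via the route servers."""
    return route_servers


def sessions_bilateral(members: int) -> int:
    """Sessions one member needs to reach every other member bilaterally."""
    return members - 1


def full_mesh_sessions(members: int) -> int:
    """Total sessions on the exchange if every pair of members peers directly."""
    return members * (members - 1) // 2


members = 500  # hypothetical exchange size

print("per member via route servers:", sessions_via_route_servers())
print("per member, all bilateral:   ", sessions_bilateral(members))
print("exchange-wide full mesh:     ", full_mesh_sessions(members))
```

Multiply the per-member bilateral figure by the number of exchanges a large network is present at, and the "thousands of extra sessions" in the bullet above follows directly.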

@woody I had much the same reaction when we received notice of this programme a few months ago as a LONAP peer.

However, in practice:

* through their portal (much as I am not a fan of every bloody network making me sign up for their portal) they were responsive,

* the portal is nowhere near as much hassle as the azure one was,

* they actually turned up peering with us no questions asked despite not meeting their stated policy of minimum 2 locations

I guess the policy is out of date?

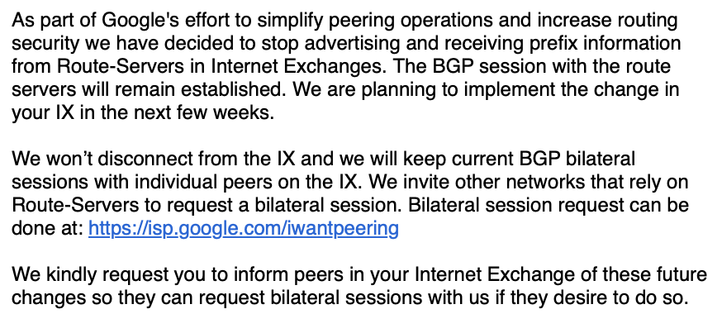

⬇️ "As part of Google's effort to simplify peering operations and increase routing security we have decided to stop advertising and receiving prefix information from Route-Servers in Internet Exchanges. The BGP session with the route servers will remain established. We are planning to implement the change in your IX in the next few weeks.

We won't disconnect from the IX and we will keep current BGP bilateral sessions with individual peers on the IX. We invite other networks that rely on Route-Servers to request a bilateral session. Bilateral session request can be done at: https://isp.google.com/iwantpeering

We kindly request you to inform peers in your Internet Exchange of these future changes so they can request bilateral sessions with us if they desire to do so."