@mrtazz @asociologist @debcha “Making systems resilient is fundamentally at odds with optimization, because optimizing a system means taking out any slack. A truly optimized, and thus efficient, system is only possible with near-perfect…”

Sing it, friend.

America works too hard.

We apply the reasoning of manufacturing efficiency, where it might be a Good Idea under certain circumstances, to knowledge work, where it is a terrible idea.

@mrtazz @debcha IMO this is the core problem rotting away the software industry from the inside.

We're so far up the abstraction tree that I'm not sure that many people have the slightest clue what's more than a few levels down. The system is inherently *un*knowable and this leads to very bad systems built on very bad systems built on very bad systems built on--

@mrtazz @debcha yup, efficiency reduces redundancy. redundancy is diversity and a diverse ecology is a healthy ecology.

less redundancy means more vulnerability.

you can say efficiency is optimizing for one risk to the detriment of all others.

not smart in the long run. but who's thinking in more than 6-month increments nowadays?

Hooray for a really inefficient way to reduce people’s energy use - Granite Geek

The couch in my living room is against an outside wall, right under a window. I have spent three decades trying to eliminate the draft against the back of my neck when I sit there. WindowDressers might have an answer. Better than that: They go about it in an interesting, if inefficient, manner. The volunteer-based […]

If you have found a way to remove any security-related impediment then you have bypassed security.

> For a system to be reliable, on the other hand, there have to be some unused resources to draw on when the unexpected happens.

I have been saved *multiple* times by having "pipe 5 gigs of /dev/zero into a file" be part of my standard setup on any important bare-metal systems, so when everything is burning because something unexpectedly ate all your disk space you can just delete the file and immediately have breathing room.
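For anyone who wants to script that trick, here is a minimal sketch in Python. The function names (`create_ballast`, `release_ballast`) and the demo path are made up for illustration; the post only describes filling a file from /dev/zero and deleting it in an emergency. It writes real zeros rather than making a sparse file, since a sparse file would not reserve any blocks.

```python
import os
import tempfile

CHUNK = 1024 * 1024  # write 1 MiB at a time to keep memory use small


def create_ballast(path, size_bytes):
    """Write size_bytes of actual zeros to path (hypothetical helper).

    Real writes are used instead of truncate/seek so the file is not
    sparse and the space is genuinely taken on ordinary filesystems.
    """
    zeros = bytes(CHUNK)
    remaining = size_bytes
    with open(path, "wb") as f:
        while remaining > 0:
            n = min(CHUNK, remaining)
            f.write(zeros[:n])
            remaining -= n
        f.flush()
        os.fsync(f.fileno())  # push the data to disk before returning
    return os.path.getsize(path)


def release_ballast(path):
    """Delete the ballast file; return the bytes freed (0 if it is absent)."""
    try:
        size = os.path.getsize(path)
    except FileNotFoundError:
        return 0
    os.remove(path)
    return size


if __name__ == "__main__":
    # Small demo in a temp directory; the post uses about 5 GB on real systems.
    with tempfile.TemporaryDirectory() as tmp:
        ballast = os.path.join(tmp, "ballast.bin")  # assumed name
        print("reserved", create_ballast(ballast, 3 * CHUNK), "bytes")
        print("freed", release_ballast(ballast), "bytes")
```

One caveat: on filesystems that compress or deduplicate data, a file full of zeros may not actually hold any space, so it is worth checking free space before and after creating it.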

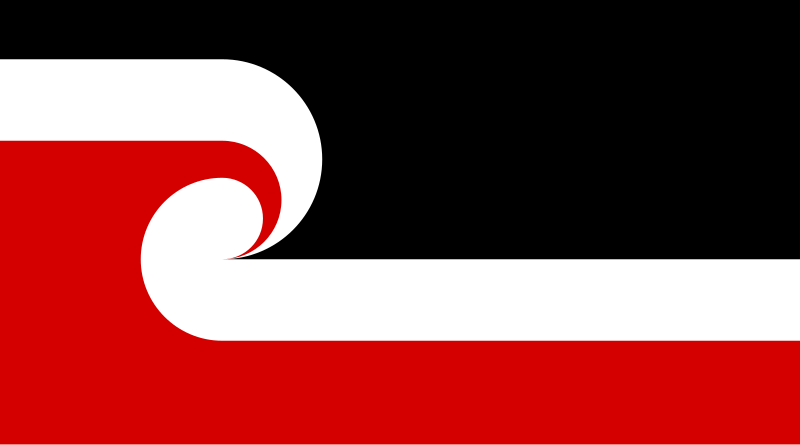
