Happy to share our paper:

Genie🧞: Achieving Human Parity

in Content-Grounded Datasets Generation

was accepted to #ICLR24

From your content

Genie creates content-grounded data

of magical quality ✨

Rivaling human-based datasets!

https://arxiv.org/abs/2401.14367

#data #NLP #nlproc #ML #machinelearning #llm #RAG

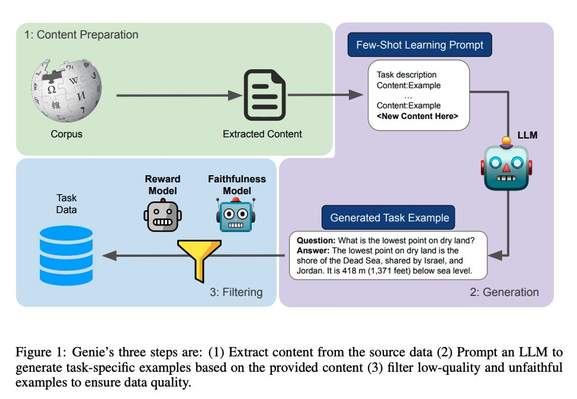

Genie: Achieving Human Parity in Content-Grounded Datasets Generation

The lack of high-quality data for content-grounded generation tasks has been identified as a major obstacle to advancing these tasks. To address this gap, we propose Genie, a novel method for automatically generating high-quality content-grounded data. It consists of three stages: (a) Content Preparation; (b) Generation, which creates task-specific examples from the content (e.g., question-answer pairs or summaries); and (c) Filtering, which aims to ensure the quality and faithfulness of the generated data. We showcase this methodology by generating three large-scale synthetic datasets, making wishes, for Long-Form Question-Answering (LFQA), summarization, and information extraction. In a human evaluation, our generated data was found to be natural and of high quality. Furthermore, we compare models trained on our data with models trained on human-written data (ELI5 and ASQA for LFQA, and CNN-DailyMail for summarization). We show that our models are on par with or outperform models trained on human-generated data, and consistently outperform them in faithfulness. Finally, we applied our method to create LFQA data within the medical domain and compared a model trained on it with models trained on other domains.
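
For readers who want a feel for the three stages, here is a rough sketch in Python. It is not the paper's implementation: every name in it (`prepare_content`, `generate_examples`, `support_score`, `filter_examples`, the `generator` callable, the 0.6 threshold) is hypothetical, and the token-overlap score is only a crude stand-in for whatever quality and faithfulness filters Genie actually uses.

```python
import re
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Example:
    content: str   # grounding passage
    question: str  # generated question
    answer: str    # generated answer


def prepare_content(raw_docs: Iterable[str], min_words: int = 5) -> List[str]:
    """Stage (a), assumed form: split documents on blank lines, drop tiny passages."""
    passages = []
    for doc in raw_docs:
        for block in re.split(r"\n\s*\n", doc):
            text = " ".join(block.split())  # normalise whitespace
            if len(text.split()) >= min_words:
                passages.append(text)
    return passages


def generate_examples(
    passages: Iterable[str],
    generator: Callable[[str], Tuple[str, str]],
) -> List[Example]:
    """Stage (b): `generator` is a placeholder for an LLM call returning (question, answer)."""
    examples = []
    for passage in passages:
        question, answer = generator(passage)
        examples.append(Example(passage, question, answer))
    return examples


def _tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def support_score(example: Example) -> float:
    """Fraction of answer tokens that also occur in the content (a crude proxy only)."""
    answer_tokens = _tokens(example.answer)
    if not answer_tokens:
        return 0.0
    return len(answer_tokens & _tokens(example.content)) / len(answer_tokens)


def filter_examples(examples: Iterable[Example], threshold: float = 0.6) -> List[Example]:
    """Stage (c), assumed form: keep examples whose answer is mostly supported by the content."""
    return [e for e in examples if support_score(e) >= threshold]
```

In practice the `generator` would wrap a prompted language model, and the filter would likely be a learned faithfulness or quality scorer rather than token overlap; the sketch only shows how the stages chain together.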