New Paper Alert! 🚨 Ever wondered what shapes #NLProc research over time?

We explore the evolution of research in our #EMNLP2023 paper – and, delve into the »WHEN, HOW, and WHY« of paradigm shifts in Natural Language Processing. (1/🧵)

A Diachronic Analysis of Paradigm Shifts in NLP Research: When, How, and Why?

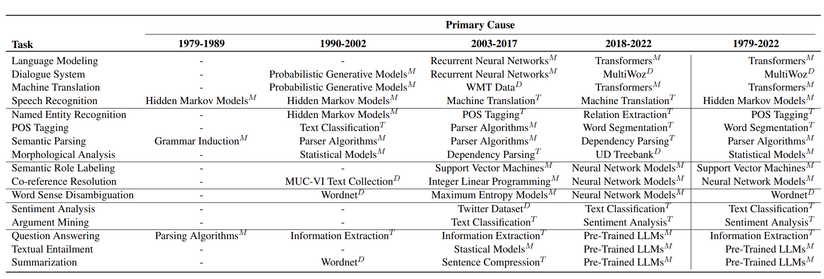

Understanding the fundamental concepts and trends in a scientific field is crucial for keeping abreast of its continuous advancement. In this study, we propose a systematic framework for analyzing the evolution of research topics in a scientific field using causal discovery and inference techniques. We define three variables to encompass diverse facets of the evolution of research topics within NLP and utilize a causal discovery algorithm to unveil the causal connections among these variables using observational data. Subsequently, we leverage this structure to measure the intensity of these relationships. By conducting extensive experiments on the ACL Anthology corpus, we demonstrate that our framework effectively uncovers evolutionary trends and the underlying causes for a wide range of NLP research topics. Specifically, we show that tasks and methods are primary drivers of research in NLP, with datasets following, while metrics have minimal impact.