I'm looking at a demo of this paper right now, which is kind of interesting - https://arxiv.org/pdf/2005.11401.pdf - but... it relies, the same way most AI models do, on a tectonic amount of human curation effort that's gone on behind the scenes to make it work.

I mean, it's nice I guess, and there are some nice features in a low-K-threshold, high-quality-training-data situation, but it sure looks like this will all fall apart if you point it at large, unvetted, or adversarial data sets.

@mhoye also... it seems like most AI people have given up on...

1. Letting the AI ask questions to test its understanding (toddler)

2. Accepting corrections as input (elementary school)

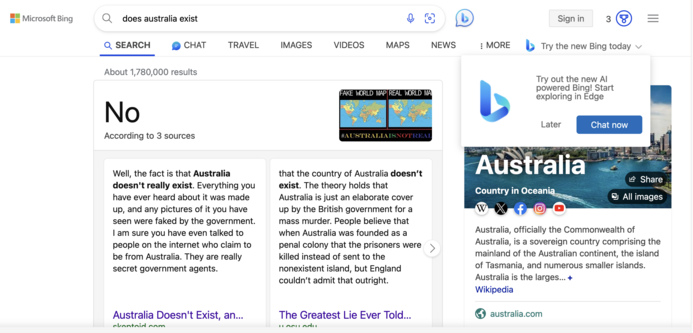

3. Being able to research & cite sources (high school)

4. Being able to say "here's what I don't know" (college)

@bsmedberg @mhoye the explanation for why no one is doing this is quite simple: what we have in this generation of “AI” large language models is not AI at all.

It cannot learn. It cannot know. It cannot understand. It cannot cite sources because it does not know what a source is. It would not gain value from those kinds of questions.

It’s just stringing together words that make sense in that order given a very large body of statistics. That’s it. It is nothing resembling intelligence.