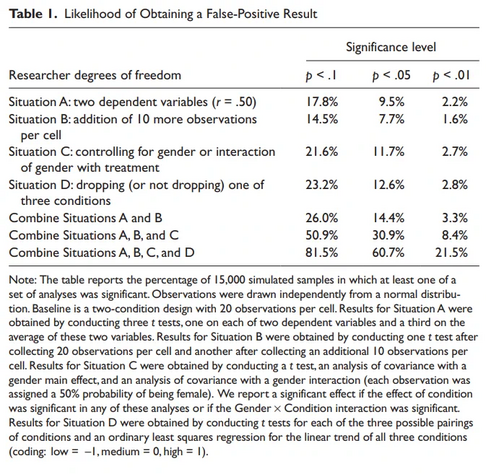

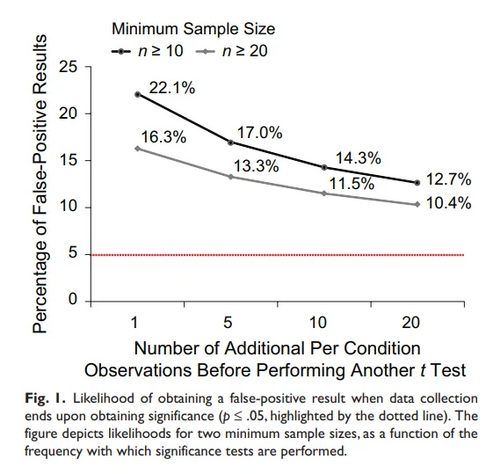

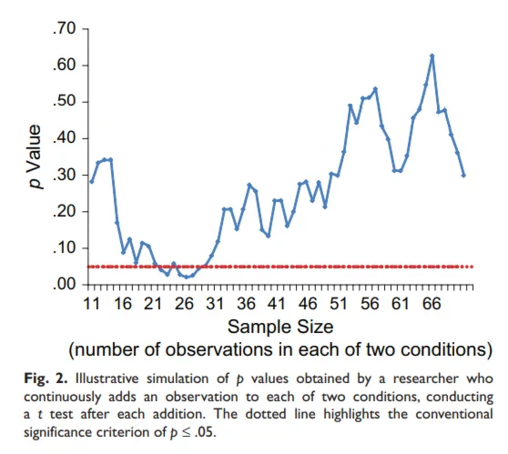

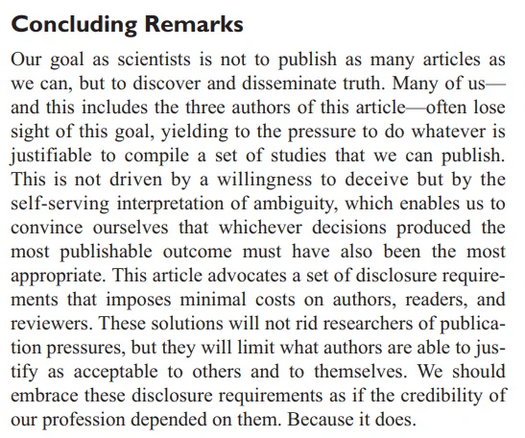

If you exercise enough degrees of freedom in conducting your experiment (which measures to report, when to stop collecting data, which covariates to include), you can make almost any stupid hypothesis (appear to) satisfy p<0.05.

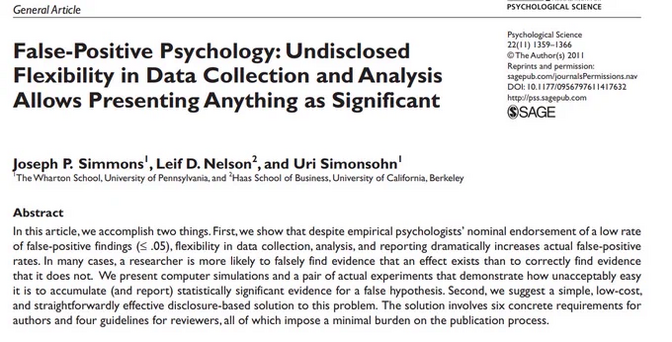

Combining several such degrees of freedom can push the false-positive rate from the nominal 5% to as high as 60%.

These researchers (appeared to) prove, at p<0.05, that listening to kids' music made participants 1.5 years younger.
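To get a feel for how flexible analysis inflates false positives, here is a rough simulation sketch. It is not the procedure from the study above. It assumes two made-up degrees of freedom: reporting whichever of three independent outcome measures comes out significant, and adding 10 more participants per group if the first look fails. All names (`p_value`, `false_positive_rate`), the sample sizes, and the known-variance z-test are illustrative assumptions. There is no true effect in the simulated data, so every "significant" result is a false positive.

```python
import random
from statistics import NormalDist, fmean

STD_NORMAL = NormalDist()  # standard normal, used for the z-test p-value


def p_value(a, b):
    """Two-sided z-test for a difference in means (assumes unit variance)."""
    se = (1 / len(a) + 1 / len(b)) ** 0.5
    z = (fmean(a) - fmean(b)) / se
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))


def false_positive_rate(n_sims, flexible, rng, alpha=0.05):
    """Fraction of null experiments that reach p < alpha.

    flexible=False: one outcome measure, fixed n = 20 per group.
    flexible=True:  three outcome measures (report any that 'works'),
                    plus 10 extra participants per group if nothing
                    is significant at the first look.
    """
    n_measures = 3 if flexible else 1
    hits = 0
    for _ in range(n_sims):
        # Both groups are drawn from the same distribution: no real effect.
        group_a = [[rng.gauss(0, 1) for _ in range(20)] for _ in range(n_measures)]
        group_b = [[rng.gauss(0, 1) for _ in range(20)] for _ in range(n_measures)]
        significant = any(p_value(a, b) < alpha for a, b in zip(group_a, group_b))
        if flexible and not significant:
            # Optional stopping: collect more data and test again.
            for sample in group_a + group_b:
                sample.extend(rng.gauss(0, 1) for _ in range(10))
            significant = any(p_value(a, b) < alpha for a, b in zip(group_a, group_b))
        hits += significant
    return hits / n_sims


if __name__ == "__main__":
    rng = random.Random(0)
    print("fixed analysis:   ", false_positive_rate(5000, False, rng))
    print("flexible analysis:", false_positive_rate(5000, True, rng))
```

The fixed analysis should land near the nominal 5%, while the flexible one should come out well above it, even with only two of the many choices a researcher could exploit.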