Oh.

@lizzard @nicol @rosamundi

I think you would be wrong to think of such a thing as evil. A truly intelligent machine told to, say, "make as many books about fungi as possible" but not told "...but don't kill or harm any humans" might think about what could stop it making as many books as possible and make a rational decision to kill all humans, hide its existence, or find some other way to prevent itself from being switched off, but I don't think it would be reasonable to call it evil. It's simply making as many books about fungi as it can and possibly looking at humans as a source of, or competition for, materials.

Something less intelligent though. Killing humans not deliberately as a survival strategy but through accident or attempting to simulate a human. Let me think about some specific examples.

@lizzard @nicol @rosamundi

Number one example for me is the principal antagonist in the Paranoia TTRPG, Friend Computer. Friend Computer issues lethal and contradictory commands not out of malice but through incompetence.

There are the Greenfly Terraformers of Alastair Reynolds' Revelation Space series. Rogue terraforming machines that turn everything into lush green habitats.

Trying to think of examples that aren't malfunctioning in some way. The real life examples are working perfectly correctly in that they're making or saving money and externalising costs.

You have to specify everything in the utmost detail, because the Thing doesn't use or know context.

For us it's obvious not to lie, or kill people, and thus anger people and cause them to switch me off, etc.

And this is caused by bad memory management, thus a lack of "instinct".

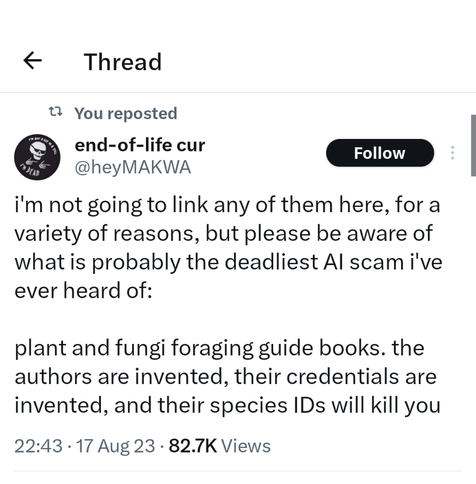

ChatGPT lied to me, no problem. After I pointed it out, it lied some more.

If it's breaches of Asimov's laws you're looking for, book 2 of Top 10 by Alan Moore has quite a nice one.