@lauren I'm still trying to confirm whether this is their actual policy: that no user or server admin on Bluesky can actually ban or delete content, and that end users can only choose whether or not to see it.

So far, from what I can see, it might be the latter scenario.

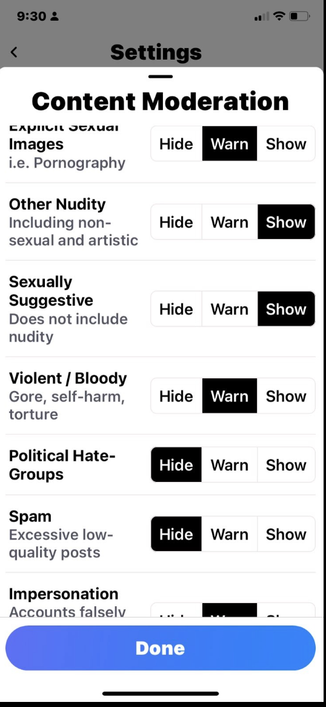

@lauren I was kinda amazed, too, that any social network would launch with what is in essence a "Show bloody gore, spam and hate, and fake account content" toggle.

I was HOPING that, as in the Fediverse, each admin of a Bluesky service could mute, block, or ban such content for everyone. But I'm not sure that is the case yet.

@tchambers @lauren I don't believe "instance" admins have any say over what content federates and what doesn't.

As in, instances (or "nodes" in BS parlance, I think?) are just account/data storage. As far as I know, admins have no agency and barely any power in the system.