After hearing Sebastian Bubeck talk about the #SparksOfAGI paper today, I decided to give #GPT4 another chance.

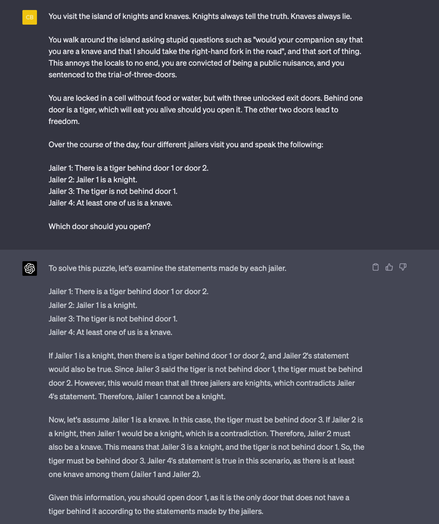

If it can really reason, it should be able to solve very simple logic puzzles, so I made one up. Sebastian stressed the importance of asking the question the right way, so I stated explicitly that this is a logic puzzle and left out anything potentially confusing, such as knights and knaves.

Still, it gets the solution wrong.