@BuildSoil Not really. The best way to think about it is to consider how that first layer slices up the (x,y) plane if the activations are hard step functions. Without the bias, the boundary of each neuron’s activation always passes through the origin. With the bias, it can slice along any line.
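
To make the slicing picture concrete, here is a minimal sketch of a single hard-step neuron on the (x,y) plane. The name `step_neuron` and the weights and bias values are made up for illustration; they are not from any particular network.

```python
def step_neuron(x, y, w1, w2, b=0.0):
    """Hard step activation: 1 when w1*x + w2*y + b > 0, else 0."""
    return 1 if w1 * x + w2 * y + b > 0 else 0

# No bias: the boundary x + y = 0 passes through the origin, so two points
# on opposite sides of the origin get different outputs.
no_bias = [step_neuron(0.1, 0.1, 1, 1), step_neuron(-0.1, -0.1, 1, 1)]

# Bias b = -1: the boundary moves to x + y = 1, so both points near the
# origin now fall on the same side.
with_bias = [step_neuron(0.1, 0.1, 1, 1, b=-1), step_neuron(-0.1, -0.1, 1, 1, b=-1)]

# The switch now happens across x + y = 1 instead.
across_shifted_line = [step_neuron(0.4, 0.4, 1, 1, b=-1), step_neuron(0.6, 0.6, 1, 1, b=-1)]

print(no_bias, with_bias, across_shifted_line)
```

Changing `w1` and `w2` rotates the boundary; only `b` can move it off the origin.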

@R4_Unit ok but with Mandelbrot x² + c, adjustments to c don’t go through the origin either, but it still goes back and forth in a cycle of growing exponentially and then being pulled or pushed by some value

@BuildSoil fair but I guess then they are similar since both add. I guess the most important difference is that most neural networks don’t feed through the same weights repeatedly, so we expect no similar dynamical properties. In fact, chaotic behavior in neural networks is associated with them being hard to optimize, so I guess it depends on what you consider “like c” to mean…

@R4_Unit are you sure about that? In an ecosystem model we do exactly the same. After adjusting r values and weights for each link, the model runs from left to right taking in sunlight and site conditions. Each element attempts autocatalytic growth, which then grows its herbivores, then predators. The populations of those predators then feed back, adjusting the populations from right to left

@R4_Unit in these ecologies there is an evolution, or adjustment of the coefficients, over time, but in the short run there is an adjustment of populations in the intermediate layers

@R4_Unit learning/training is clearly dynamical when you are seeking minima

@BuildSoil I think I still need to know what you mean by “like C”. What property does C have that you want to know if the bias has it too? Certainly it sounds like these ecological models are somewhat similar, and the optimization dynamics of even very simple neural networks can exhibit chaotic and bifurcation phenomena, so if that is what you mean, then yes!

@R4_Unit in the Julia sets you square and then move by some amount. Akin to an adjusted r value in population models.
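
The "square and then move by some amount" step can be sketched as below. This is a rough illustration only: the function name `escape_steps`, the iteration cap, and the escape radius of 2 are choices made for this example.

```python
def escape_steps(c, z0=0j, max_iter=50, escape_radius=2.0):
    """Iterate z -> z*z + c and count steps until |z| exceeds escape_radius.

    Returns max_iter if the orbit stays bounded for the whole run.
    """
    z = z0
    for n in range(max_iter):
        if abs(z) > escape_radius:
            return n
        z = z * z + c  # square, then shift by c
    return max_iter

bounded_fixed = escape_steps(0)   # orbit stays at 0
bounded_cycle = escape_steps(-1)  # orbit alternates 0, -1, 0, -1, ...
escaping = escape_steps(1)        # orbit 0, 1, 2, 5, ... blows up
print(bounded_fixed, bounded_cycle, escaping)
```

Starting from `z0=0` and varying `c` probes the Mandelbrot set; fixing `c` and varying `z0` probes the Julia set for that `c`.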

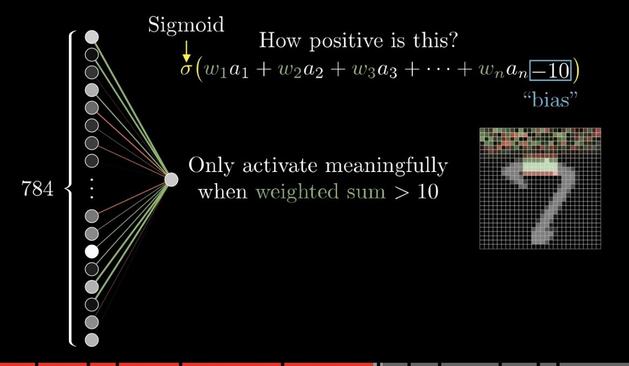

In the equations used in neural nets to express the influence of each neuron on the next, I see the value of the next layer as all of the things it “eats”, adjusted by some amount and then grown logistically, just like we would do in a pop model. Though I do wonder if you could just have the predation of the next layer, giving the same effect as in Lotka-Volterra
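
A sketch of that reading, assuming the equations in the image are the usual weighted sum plus bias passed through a logistic sigmoid (the image itself is not reproduced here, so this is a guess). The names `layer`, `weights`, and `biases` and all the numbers are illustrative.

```python
import math

def sigmoid(t):
    """Logistic squashing function, output strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-t))

def layer(inputs, weights, biases):
    """Compute one layer: neuron j gets sigmoid(sum_i weights[j][i] * inputs[i] + biases[j])."""
    return [
        sigmoid(sum(w * a for w, a in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

# Two "prey" activations feeding two "consumer" neurons (made-up numbers).
prey = [0.8, 0.3]
consumers = layer(prey, weights=[[1.5, -0.5], [0.2, 2.0]], biases=[-0.5, 0.1])
neutral = layer([0.0, 0.0], weights=[[1.0, 1.0]], biases=[0.0])
print(consumers, neutral)
```

In the analogy, the weighted sum is what the neuron "eats", the bias is the adjustment, and the sigmoid plays the role of saturating growth.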

@R4_Unit btw thank you so much for engaging with me. I’m trying to understand the relationship here

@BuildSoil happy to! Analogies are the lifeblood of science, so bringing intuitions from one to the other can be a powerful tool!

@BuildSoil I think there are many similarities, but the fact that one is designed to be viewed dynamically makes a difference to the interpretation I give it. In neural networks, the training dynamics (where weights are changed) and the prediction dynamics (where you pass data through to make predictions) are often viewed separately, since you can change the way you train without changing how you predict.

@BuildSoil In most neural networks (unless they are recurrent, like population models) the biases are used only once per pass, so they can’t produce the interesting phenomena you see. Training by gradient descent, though, is (as you’ve said) inherently recurrent, and in that case yes, they are very similar indeed!
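
One way to see why repeated use matters: a minimal sketch using the logistic map x → r·x·(1−x) as a stand-in population model. The map and the r values are chosen here for illustration and are not taken from the thread. Applied once, r just rescales the output; fed back through the same r many times, it decides whether the population settles or oscillates.

```python
def logistic_orbit(r, x0=0.2, steps=1000):
    """Feed x back through the same map x -> r*x*(1-x) and record every value."""
    x = x0
    orbit = [x]
    for _ in range(steps):
        x = r * x * (1.0 - x)
        orbit.append(x)
    return orbit

# Used once (like a bias in a single forward pass): just one number comes out.
single_pass = logistic_orbit(2.5, steps=1)[-1]

# Reused many times: r = 2.5 settles to the fixed point 1 - 1/r = 0.6 ...
settled = logistic_orbit(2.5)[-1]

# ... while r = 3.2 ends up alternating between two values.
cycle = logistic_orbit(3.2, steps=1002)[-3:]
print(single_pass, settled, cycle)
```

Pushing r higher still (toward 4) gives chaotic orbits, which is the kind of behavior a single feedforward pass cannot show.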

@BuildSoil This question of predation is a very interesting one! One of the problems people talk about in current artificial neural networks is that gradient descent seems like it cannot be directly implemented in biological neurons (as currently understood). One of the proposals is that competition between neurons can serve as a method to update weights (see e.g. here: https://arxiv.org/pdf/1806.10181.pdf)