#lookingback #2010to2020

Here comes the thread to dread. Looking back at a few of the things I made during the past decade.

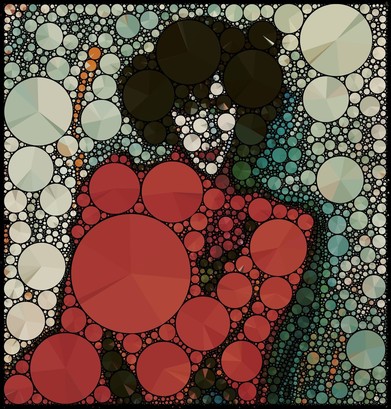

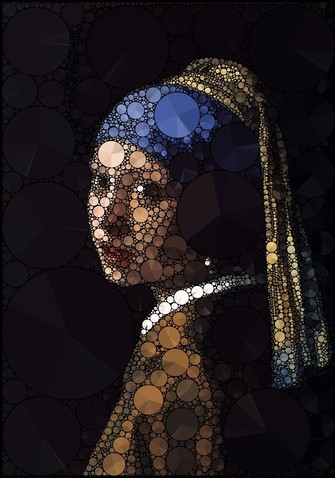

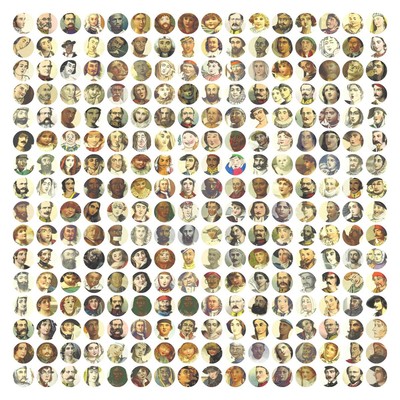

Back in 2010 I was deep in love with computational geometry and in particular with circle packing. One of the more popular projects was "Pie Packing", in which I remade paintings with packed pie diagrams that showed the distribution of the colors they covered.

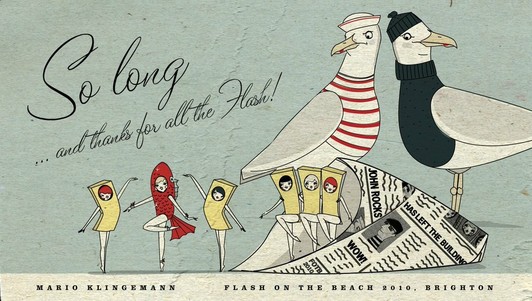

I was still making everything in Flash then. However, at the end of that year I decided to take a break from the speaking circuit and my final talk was titled "So long ... and thanks for all the Flash!" - some foresight in hindsight.

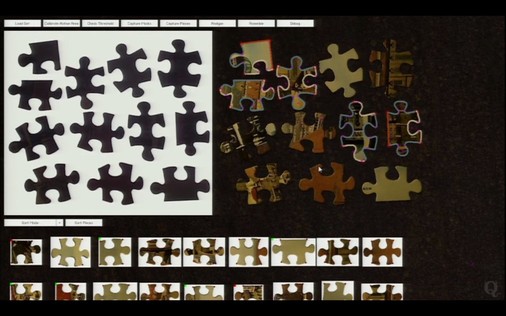

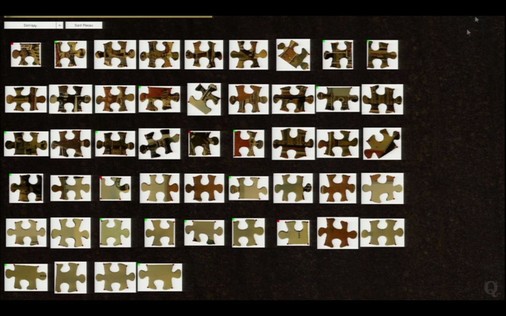

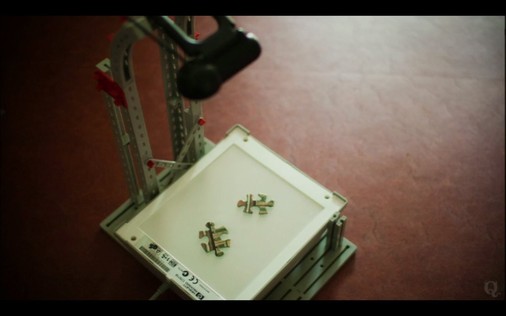

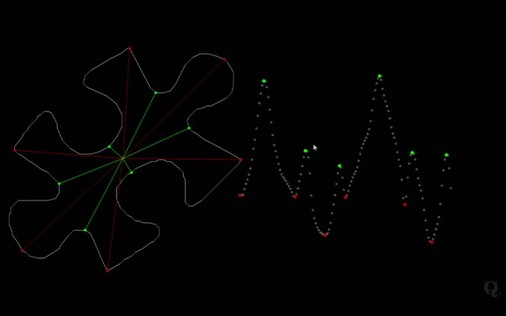

That talk, in which I forced people to watch a several-minute-long clip of me solving a jigsaw puzzle, was about my attempts to write a program that would do that job automatically. It used all the little machine learning knowledge I had at that time.

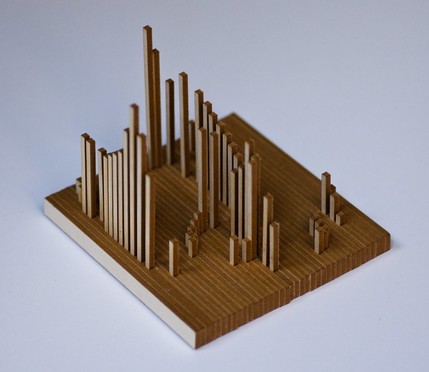

2011 was about making. After having seen the fantastic things my friend Jared Tarbell had been creating with his laser cutter I wanted to get my hands on one, too. Luckily some other people in Munich had similar desires and we founded @[email protected] in the basement of my studio.

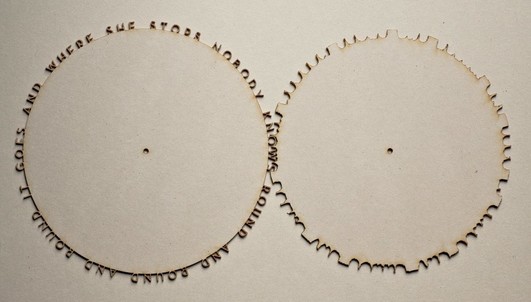

One of the directions was combining coding with making physical objects, like playing with cast shadows or making gears from type that actually work.

In that year I also made "Like This" - a very democratic artwork that shows everyone how many others have already liked it.

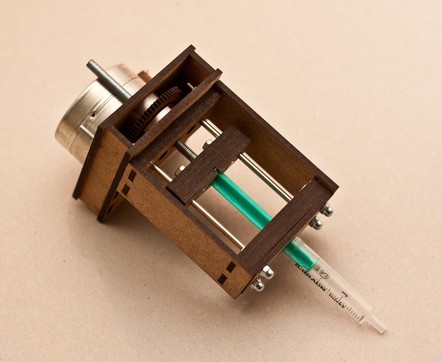

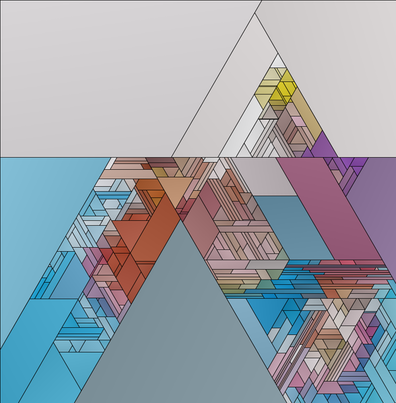

2012 seems to have been a mixed bag: making physical things like "Serendripity", an ink drop painting machine, exploring cellular automata feedback loops, and computational geometry.

2013 is when I started to focus on machine learning and image classification. It began with the @[email protected] dumping 1 million unclassified images on Flickr Commons. Back then, still without the help of neural networks, I assisted in labeling a few hundred thousand of them.

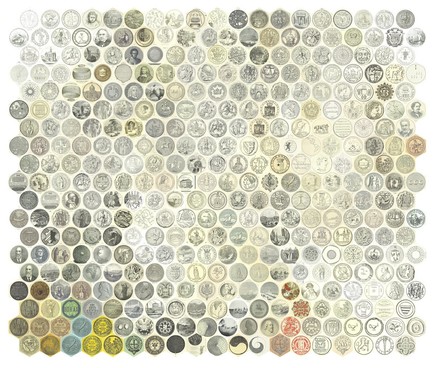

In 2014 I started to look for ways to arrange huge numbers of images in a meaningful way and learned how difficult it is to decide what "similar" means.

In the same year I also got my first (and so far last) opportunity to work with industrial robots. For a shop window I made a choreography for two of those. Unfortunately I only found a test clip of the PONG sequence.

Speaking of bots - 2014 was also the year when @[email protected] was born, following the legendary and now suspended @[email protected], which inspired a whole army of Twitter art bots. In particular the #bottobot ping pong sessions between the bots were amazing to watch.

In the same year I also got my first chance to show work in a museum. For "The Life of Things / The Things of Life" at the Residenzschloss Dresden I used machine learning to analyze the relationships of 99 bowls and created several data visualizations from that.

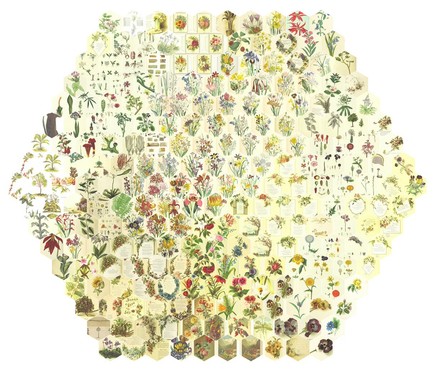

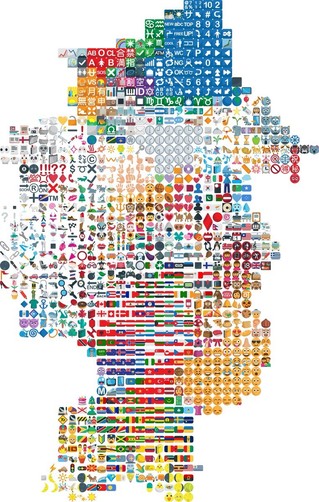

2015 continued with clustering and arranging. But now it became "deep" since I learned how to use Caffe and the feature vectors from neural image classification networks.

Emojis and Album covers arranged by similarity.

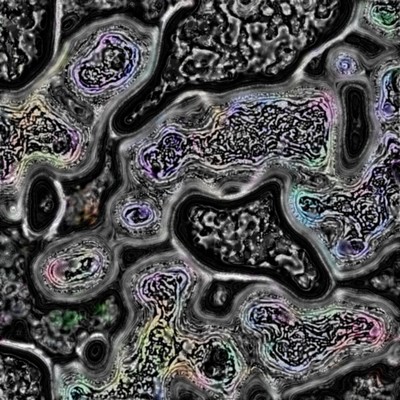
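
For readers curious what "arranged by similarity" can mean in practice, here is a minimal sketch. It is not the original Caffe pipeline: it assumes every image has already been reduced to a feature vector, and it uses cosine similarity plus a greedy nearest-neighbour walk to put similar items next to each other. The function names and the toy vectors are made up for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat zero vectors as similar to nothing
    return dot / (norm_a * norm_b)


def order_by_similarity(features, start=0):
    """Greedy chain: repeatedly hop to the most similar unvisited item."""
    if not features:
        return []
    remaining = set(range(len(features)))
    remaining.remove(start)
    order = [start]
    while remaining:
        current = features[order[-1]]
        # sorted() makes tie-breaking deterministic (lowest index wins)
        nxt = max(sorted(remaining),
                  key=lambda i: cosine_similarity(current, features[i]))
        order.append(nxt)
        remaining.remove(nxt)
    return order


# Hypothetical 3-dimensional "feature vectors"; real ones from a
# classification network would have hundreds or thousands of dimensions.
toy_features = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.1, 0.9, 0.0],
]
print(order_by_similarity(toy_features))  # [0, 2, 3, 1]
```

A greedy chain like this only produces a one-dimensional ordering, and choosing a different similarity measure can change the result completely, which is exactly the "what does similar mean" difficulty.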

The most important event of that year for me was the release of #DeepDream. It opened the door to visual manipulation of images with deep neural nets and marked the birth of the new wave of #aiart.

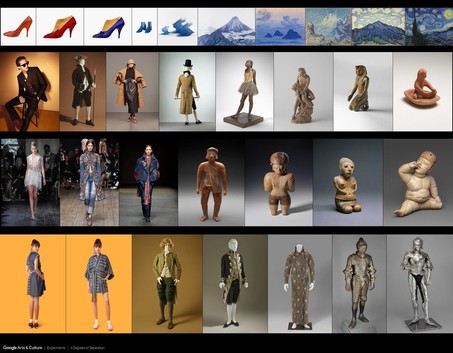

In 2016 I began my artist residency at the @[email protected] lab. It was an amazing experience and gave me the opportunity to collaborate with a brilliant team of artists and engineers. During that time I made "X Degrees of Separation".

Before #GAN became a household name there were other methods that made it possible to generate images with neural networks. #PPGN or "Plug-and-play generative networks" is what I used at the beginning. The state of the art was low resolution and very uncanny.

Almost forgot that in 2016 I also released #RasterFairy, which was my proposed solution to the problem, mentioned earlier, of arranging items on a grid.

https://twitter.com/quasimondo/status/690533177903992832?s=20
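
The "arrange into a grid" problem can be illustrated with a deliberately naive sketch. This is not the RasterFairy algorithm, only an assumed baseline: sort 2D points into rows by their y coordinate, then order each row by x, so every point gets a unique grid cell that roughly preserves its neighbourhood. The function name `snap_to_grid` and the sample points are hypothetical.

```python
def snap_to_grid(points, columns):
    """Assign 2D points to grid cells; returns rows of point indices."""
    if columns < 1:
        raise ValueError("columns must be at least 1")
    # Order all points top to bottom (ties broken by x).
    by_y = sorted(range(len(points)),
                  key=lambda i: (points[i][1], points[i][0]))
    # Cut that ordering into rows of `columns` points each.
    rows = [by_y[r:r + columns] for r in range(0, len(by_y), columns)]
    # Within each row, order the points left to right.
    return [sorted(row, key=lambda i: (points[i][0], points[i][1]))
            for row in rows]


# Hypothetical 2D positions, e.g. the output of a dimensionality reduction.
sample_points = [(0.9, 0.1), (0.1, 0.2), (0.2, 0.8), (0.8, 0.9)]
print(snap_to_grid(sample_points, columns=2))  # [[1, 0], [2, 3]]
```

The weakness of this baseline is that clusters get torn apart whenever a row boundary cuts through them, which is part of why the problem deserves a dedicated solution.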

2017 is when things got serious. I totally missed out on #DCGAN - maybe it was because I lacked the necessary hardware to train my own models. So #pix2pix became my door to the world of #GANs. I started with some next-frame-prediction experiments.

https://twitter.com/quasimondo/status/817382760037945344?s=20

My biggest issue with that early neural imagery was always the low resolution. I couldn't imagine that people would dare to show this kind of low quality work in an art context. So I began my upscaling quest which resulted in #transhancement. https://twitter.com/quasimondo/status/820027595429449732?s=20

Looks like January 2017 was very productive, since I also started training my pose-to-image model which later became part of #MyArtificialMuse

https://twitter.com/quasimondo/status/825306300619825152?s=20

The rest of that year was mostly about experimenting with model architectures and training methods to get more details and better textures and of course to further explore the strange. It's also marked by the ongoing battle against "The Artifact".

https://twitter.com/quasimondo/status/931673425541812225?s=20

At the beginning of 2017 I gave a rough outline of what later would be known as #DeepFake.

https://twitter.com/quasimondo/status/827901041349890049?s=20

By the end of 2017 my efforts to improve resolution were obliterated by two major breakthroughs: in short succession @[email protected] first showed their highly realistic celebrities made with #PGAN and followed up with #pix2pixHD shortly after.

https://twitter.com/quasimondo/status/928604109602770945?s=20

2018 was the year when #AIart got everyone's attention. While there had already been a few shows featuring this kind of work in the years before, now it was everywhere.

I was quite proud to show "Neurography" at the @[email protected] and "The Uncanny Mirror" at the Seoul Biennale.

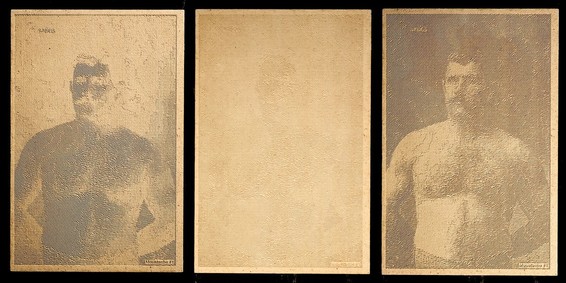

To my great surprise that year I won the @[email protected] gold for "The Butcher's Son".

https://twitter.com/quasimondo/status/1024584013711851520?s=20

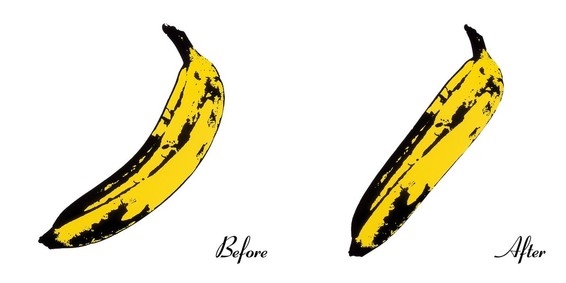

My inability to just shut up about "that" work that shall not be named got my art into the @[email protected] - I still wonder how it would have fared without the extra press generated by that controversy.

In 2018 I also got asked a question by a collector that is probably every artist's dream: "What is it you would like to make, but could not so far?" So I got commissioned to create "Memories of Passersby".

2019 was probably the "you reap what you sow" year. The problem with "success" or however you might call it is that it is so damn time-consuming. In hindsight it feels like I did not get as much work done as I wish I had and my time to experiment took a major dent.

But I am complaining from a very comfortable position, since I got some really amazing opportunities this year. It started with making visuals for the #MassiveAttack tour.

https://twitter.com/quasimondo/status/1090174818962587648?s=20
