Checking the effect of Intel AMX with oneDNN and OpenVINO GenAI

https://qiita.com/shirok/items/e6f8f5f7867d335814dd

@pytorch 2.4 upstream now includes prototype support for Intel GPUs (source build only) using #SYCL and #oneDNN, plus a backend integrated into Inductor on top of Triton. This opens a path to millions of GPUs through #oneAPI for #AI.

Many important milestones made this possible, including support for #UXL Foundation open AI technologies. It is just a prototype, but a big step forward. Thanks to everyone in the PyTorch community. Feedback welcome!

PyTorch 2.4 Release Blog

We are excited to announce the release of PyTorch® 2.4 (release note)! PyTorch 2.4 adds torch.compile support for the latest version of Python (3.12). AOTInductor freezing gives developers running AOTInductor more performance-based optimizations by allowing the serialization of MKLDNN weights. In addition, a new default TCPStore server backend built on libuv has been introduced, which should significantly reduce initialization times for users running large-scale jobs. Finally, a new Python Custom Operator API makes it easier to integrate custom kernels into PyTorch, especially for torch.compile.

.@IntelSoftware @IntelDevTools #oneDNN 3.1 Further Optimizing For #SapphireRapids, Starts Tuning For #SierraForest

-- Lots of improvements in oneDNN 3.1!

https://www.phoronix.com/news/Intel-oneDNN-3.1

Original tweet: https://twitter.com/phoronix/status/1641898116151496731

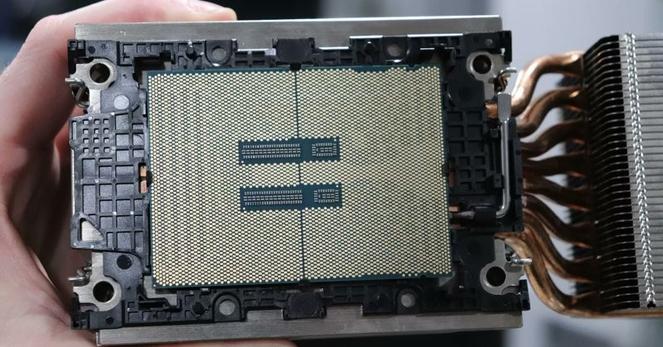

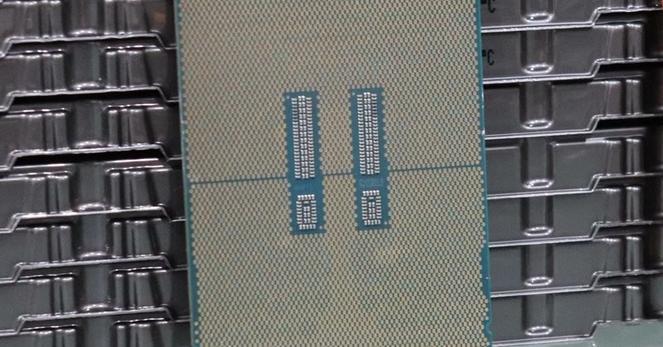

.@Intel Advanced Matrix Extensions (AMX) Performance With Xeon Scalable #SapphireRapids

-- The big #AI performance uplift and power-efficiency benefits of #AMX, shown with #oneAPI #oneDNN & #OpenVINO benchmarks

https://www.phoronix.com/review/intel-xeon-amx

Original tweet: https://twitter.com/phoronix/status/1615054211582164993
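AMX speedups only show up on CPUs that actually expose AMX. One possible way to check this on Linux, before running oneDNN or OpenVINO benchmarks, is to look for the AMX flags in `/proc/cpuinfo`. The sketch below assumes a Linux kernel that reports the `amx_tile`, `amx_bf16` and `amx_int8` flags. The function names `amx_flags_from_cpuinfo` and `detect_amx` are hypothetical helpers for illustration, not part of oneDNN or OpenVINO.

```python
import platform
from pathlib import Path

# Linux /proc/cpuinfo flag names for the AMX sub-features (assumption: the
# running kernel is recent enough to report them).
AMX_FLAGS = ("amx_tile", "amx_bf16", "amx_int8")


def amx_flags_from_cpuinfo(text: str) -> dict[str, bool]:
    """Report which AMX flags appear in the first 'flags' line of cpuinfo text."""
    for line in text.splitlines():
        if line.startswith("flags"):
            # Line format on x86 Linux: "flags\t\t: fpu vme de ..."
            _, _, values = line.partition(":")
            present = set(values.split())
            return {flag: flag in present for flag in AMX_FLAGS}
    # No x86 'flags' line found (e.g. a non-x86 CPU): report AMX as absent.
    return {flag: False for flag in AMX_FLAGS}


def detect_amx() -> dict[str, bool]:
    """Check the local machine; returns all False outside Linux."""
    cpuinfo = Path("/proc/cpuinfo")
    if platform.system() != "Linux" or not cpuinfo.exists():
        return {flag: False for flag in AMX_FLAGS}
    return amx_flags_from_cpuinfo(cpuinfo.read_text())


if __name__ == "__main__":
    for flag, ok in detect_amx().items():
        print(f"{flag}: {'yes' if ok else 'no'}")
```

A flag being present only means the hardware and kernel expose AMX. Whether a given oneDNN build actually dispatches to AMX kernels is a separate question; oneDNN's verbose mode (`ONEDNN_VERBOSE`) can help confirm which ISA it selected.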

.@IntelDevTools Releases #oneAPI #oneDNN 3.0 In Advance Of #SapphireRapids

-- Plus optimizations for other Intel CPUs & GPUs, including initial Granite Rapids support.

https://www.phoronix.com/news/Intel-oneDNN-3.0

Original tweet: https://twitter.com/phoronix/status/1605147559525335041

#Intel #oneAPI @IntelDevTools @IntelSoftware #oneDNN 3.0 Being Prepared With More Performance Optimizations

-- Plus optimizations for Arm, Power, NVIDIA & AMD hardware too.

https://www.phoronix.com/news/Intel-oneDNN-3.0-RC

Original tweet: https://twitter.com/phoronix/status/1599012110301679616

.@IntelSoftware #oneDNN 2.7 Released With Sapphire Rapids & DG2 Optimizations, #AMD GPU Bringup

https://www.phoronix.com/news/Intel-oneDNN-2.7-Released

Original tweet: https://twitter.com/phoronix/status/1575047333707776000

Qiita - Popular Articles