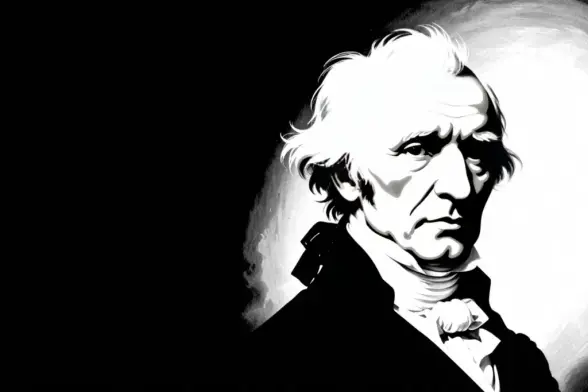

“Alexander Von Humboldt” by Friedrich Georg Weitsch

According to records, the painting "Alexander Von Humboldt" by Friedrich Georg Weitsch was created in 1799 and is an oil-on-canvas work measuring approximately 120 x 90 cm. The portrait depicts Humboldt at the age of 34, surrounded by symbols of his scientific pursuits and travels. This is how the EventHorizonPictoXL image generation model "sees" the "Alexander Von Humboldt" painting by Friedrich Georg Weitsch.

Text model: llama3. Image model: EventHorizonPictoXL.

https://ai.forfun.su/2026/04/18/alexander-von-humboldt-by-friedrich-georg-weitsch-2/