Three glass cubes containing colored foils are rotating.

A sea anemone, fish swimming by, and red algae. No Aliens in this image!

One yellow fish in the aquarium.

Brain Activity

March 2026 - A video (https://doi.org/10.1101/649822) of a brain MRI is slit-scanned with color dispersion and fed to the YOLO object recognition model. Activations in YOLO's 7th backbone layer are used to modulate alpha transparency and luminance in a 3D render of the slit-scanned video as a volume (of width x height x time). The accompanying music is made by injecting embeddings from the CLIP image description model running on the resulting video into the conditioning pathway of Facebook's MusicGen generative music model.
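The activation-driven transparency and luminance described above can be sketched roughly as follows. This is a minimal NumPy illustration, not the code used for the piece: it assumes a single 2D activation map has already been extracted from the network, and the function name `modulate_with_activation`, the `min_alpha` floor, and the 0.5 to 1.0 brightness range are all hypothetical choices.

```python
import numpy as np

def modulate_with_activation(frame_rgb, activation, min_alpha=0.05):
    """Return an RGBA frame whose alpha and brightness follow an activation map.

    frame_rgb:  (H, W, 3) float array with values in [0, 1]
    activation: (h, w) array, e.g. a channel-averaged feature map (assumed given)
    """
    H, W, _ = frame_rgb.shape
    h, w = activation.shape
    # Nearest-neighbour upsampling of the coarse map to the frame size.
    rows = np.arange(H) * h // H
    cols = np.arange(W) * w // W
    act = np.asarray(activation, dtype=float)[rows[:, None], cols[None, :]]
    # Normalize to [0, 1]; a constant map becomes all zeros.
    span = act.max() - act.min()
    act = (act - act.min()) / span if span > 0 else np.zeros_like(act)
    # Strong activations are opaque and bright, weak ones faint and dimmed.
    alpha = min_alpha + (1.0 - min_alpha) * act
    rgb = frame_rgb * (0.5 + 0.5 * act[..., None])
    return np.concatenate([rgb, alpha[..., None]], axis=-1)
```

Applying this to every frame yields an RGBA volume of shape time x height x width x 4 that a volume renderer could display.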

#slitscan #computationalart #brain #generativeart #deeplearning #abstract #videoart #musicgen #yolo #clip #MRI

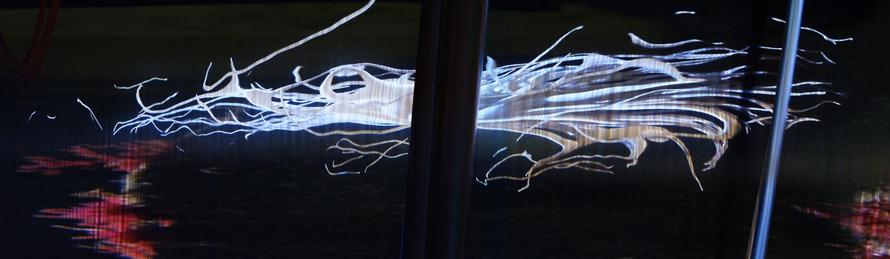

Holographic

Slit-scan video of people moving in Amsterdam Centraal railway station in August 2021, rendered as a volume. The transparency of each pixel is determined by activations of a layer of the Ultralytics YOLO model run on the video. The music is generated by injecting activations of a neural layer of OpenAI's CLIP image description model into Facebook's MusicGen generative model for 3-second chunks of this video.
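For readers unfamiliar with slit-scanning, here is a minimal sketch of the simplest fixed-slit variant: one pixel column is taken from each frame and the columns are laid side by side in time order. The function name `slit_scan` and the default centre slit are assumptions for illustration; the piece itself may use a different variant.

```python
import numpy as np

def slit_scan(frames, slit_x=None):
    """Build a slit-scan image from a video.

    frames: (T, H, W, C) array of video frames
    slit_x: column index of the slit (hypothetical default: the centre column)
    Returns an (H, T, C) image in which the horizontal axis is time.
    """
    frames = np.asarray(frames)
    T, H, W, C = frames.shape
    if slit_x is None:
        slit_x = W // 2
    # frames[:, :, slit_x] has shape (T, H, C); swap axes so time runs left to right.
    return frames[:, :, slit_x].transpose(1, 0, 2)
```

Sweeping `slit_x` across the frame width and stacking the resulting images gives another way to look at the same width x height x time volume.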

#generativeart #computationalart #slitscan #videoart #abstractstreet #amsterdamcentraal #amsterdam #netherlands #deeplearningart

Timeslices

People walking in front of Tokyo's Shinagawa station, January 2026.

Video rendered as a width x height x time volume and cut into 100 semitransparent slices.
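A rough idea of how semitransparent slices of such a volume blend can be given with standard back-to-front "over" compositing. The sketch below looks straight down the time axis and treats each slice as a single frame with uniform opacity, whereas the actual piece is a 3D render; the function name `composite_time_slices` and the `alpha` value are assumptions.

```python
import numpy as np

def composite_time_slices(volume, n_slices=100, alpha=0.05):
    """Blend evenly spaced time slices of a video volume into one image.

    volume: (T, H, W, C) float array with values in [0, 1]
    alpha:  opacity of every slice (hypothetical uniform value)
    """
    volume = np.asarray(volume, dtype=float)
    T = volume.shape[0]
    # Indices of evenly spaced frames along the time axis.
    idx = np.linspace(0, T - 1, num=min(n_slices, T)).round().astype(int)
    out = np.zeros(volume.shape[1:], dtype=float)
    # Back-to-front "over" compositing: the latest slice is drawn first,
    # so the earliest slice ends up nearest the viewer.
    for t in idx[::-1]:
        out = alpha * volume[t] + (1.0 - alpha) * out
    return out
```

With a low `alpha`, many slices contribute to each output pixel, which is what produces the layered, ghost-like look of overlapping moments.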

#videoprocessing #computationalart #slitscan #3d #tokyo #abstractstreet #japan #video #videoart

Offenbach-O, remix based on data from: Deutscher Wetterdienst, under Creative Commons CC BY 4.0 [bot-beta]

#slitscan

Pemberton, CA BC, NAV CANADA, FAA WeatherCam data remix [bot-beta]

#slitscan

Trolltinden mot Andenes 69.241255,16.003142, remix based on data from: MET Norway, under Creative Commons CC BY 4.0 [bot-beta]

#slitscan
