LLM Inference Takes Aim at Production Realities

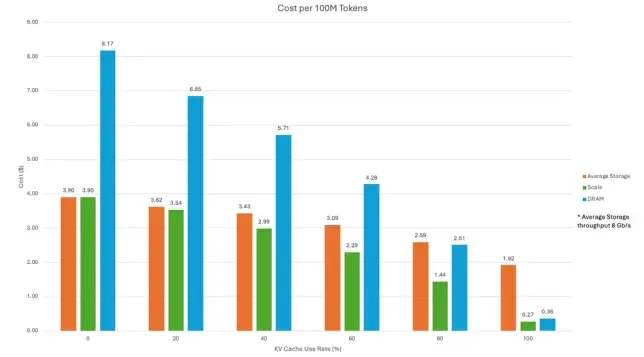

Disaggregated LLM serving is faster and cheaper than the older aggregated approach for businesses running AI in production, and tests show better performance.

#LLMServing, #AIefficiency, #OracleCloud, #AMDMI300X, #TechNews

https://newsletter.tf/disaggregated-llm-serving-faster-than-aggregated/