Really missing #ESMARConf2023, where I could catch up at my leisure on YouTube...

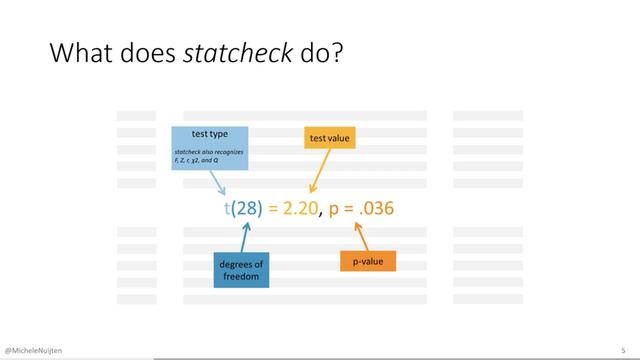

The {statcheck} #rstats 📦 is “a ‘spellchecker’ for statistics. It checks whether your p-values match their accompanying test statistic and degrees of freedom.”

statcheck searches text for null-hypothesis significance tests in APA style (e.g., t(28) = 2.2, p < .05), recalculates each p-value, and flags errors if reported and computed values don’t match.
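To make the idea concrete, here is a minimal sketch of that kind of consistency check for t-tests only. It is written in Python with the standard library and is not statcheck's actual code or API: the function names (`two_tailed_p`, `check`), the regular expression, and the rounding tolerance are all assumptions made for illustration.

```python
import math
import re


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_coef = math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
    coef = math.exp(log_coef) / math.sqrt(df * math.pi)
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)


def two_tailed_p(t, df, steps=2000):
    """Two-tailed p-value, integrating the density over [0, |t|] with Simpson's rule."""
    t = abs(t)
    if t == 0:
        return 1.0
    h = t / steps  # steps must be even for Simpson's rule
    total = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    central = total * h / 3  # P(0 < T < |t|)
    return max(0.0, 1 - 2 * central)


# Hypothetical pattern for APA-style t-tests such as "t(28) = 2.2, p < .05".
APA_T = re.compile(r"\bt\((\d+)\)\s*=\s*(-?\d*\.?\d+),\s*p\s*([<=>])\s*(\d*\.\d+)")


def check(text):
    """Recompute p for each reported t-test and compare it with the reported p."""
    results = []
    for df, t, op, p in APA_T.findall(text):
        computed = two_tailed_p(float(t), int(df))
        reported = float(p)
        if op == "<":
            consistent = computed < reported
        elif op == ">":
            consistent = computed > reported
        else:
            # Tolerance for three-decimal rounding; an assumption, not statcheck's rule.
            consistent = abs(computed - reported) < 0.0005
        results.append({
            "df": int(df),
            "t": float(t),
            "reported": f"p {op} {p}",
            "computed": computed,
            "consistent": consistent,
        })
    return results


if __name__ == "__main__":
    for row in check("We found t(28) = 2.2, p < .05, and t(28) = 1.0, p < .05."):
        print(row)
```

The sketch only handles two-tailed t-tests and treats every reported p-value as two-tailed. For real use, the {statcheck} package itself is the tool to reach for.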

By @MicheleNuijten

Repo: https://github.com/MicheleNuijten/statcheck

#ESMARConf2023 Video: https://youtu.be/XuFyqXw5ho0

@rstats #stats

Gavin Stewart in #ESMARConf2023 on rapid reviews: often, the most time-saving part of a rapid review is being smart about the research question

Along with an accompanying blog post that has some extra programming details: https://www.jepusto.com/cluster-bootstrap-selection-model/

ESMARConf2023: Presentations 5 - Quantitative synthesis 2 livestream

Principles and framework for assessing the risk of bias for studies included in comparative quantitative environmental systematic reviews - Environmental Evidence

The internal validity of conclusions about effectiveness or impact in systematic reviews, and of decisions based on them, depends on risk of bias assessments being conducted appropriately. However, a random sample of 50 recently-published articles claiming to be quantitative environmental systematic reviews found 64% did not include any risk of bias assessment, whilst nearly all that did omitted key sources of bias. Other limitations included lack of transparency, conflation of quality constructs, and incomplete application of risk of bias assessments to the data synthesis. This paper addresses deficiencies in risk of bias assessments by highlighting core principles that are required for risk of bias assessments to be fit-for-purpose, and presenting a framework based on these principles to guide review teams on conducting risk of bias assessments appropriately and consistently. The core principles require that risk of bias assessments be Focused, Extensive, Applied and Transparent (FEAT). These principles support risk of bias assessments, appraisal of risk of bias tools, and the development of new tools. The framework follows a Plan-Conduct-Apply-Report approach covering all stages of risk of bias assessment. The scope of this paper is comparative quantitative environmental systematic reviews which address PICO or PECO-type questions including, but not limited to, topic areas such as environmental management, conservation, ecosystem restoration, and analyses of environmental interventions, exposures, impacts and risks.

Impressive how #ESMARConf2023 has something for everyone. Just today there was a workshop on screening articles in systematic reviews (mentioning tools was a detail at the end), one introducing GitHub, and next there will be another on advanced Git/GitHub.

ESMARConf - Events

ESMARConf is a FREE, online annual conference series dedicated to evidence synthesis and meta-analysis in R. Our aim is to raise awareness of the utility of Open Source tools in R for conducting all aspects of evidence syntheses (systematic reviews/maps, meta-analysis, rapid reviews, scoping reviews, etc.), to build capacity for conducting rigorous evidence syntheses, to support the development of novel tools and frameworks for robust evidence synthesis, and to support a community of practice working in evidence synthesis tool development. ESMARConf began in 2021 and is coordinated by the Evidence Synthesis Hackathon.

Jacqui Eales is running a workshop on screening studies for eligibility in evidence syntheses

ESMARConf2023: Workshop 6 - Screening studies for eligibility in evidence syntheses

Guido Schwarzer and Gerta Rücker just demonstrated in #ESMARConf2023 their package {netmeta} for frequentist network #MetaAnalysis in #RStats

https://www.youtube.com/live/A4foA25UylY

The slides etc are here:

ESMARConf2023: Workshop 5 - Network meta-analysis using R package netmeta

Trouble dealing with meta-scientific replication data? There's still time to register for #ESMARConf2023 and catch our tutorial on MetaPipeX - a novel framework, R package and Shiny app to make multi-site replication data more accessible!

You don't want to miss it!

Most work is done by @JFuenderich - @epizyklen and my fabulous personality show up mostly to spice up the tutorial!