Looking for inspiration on this Friday? Liu et al. have explored the potential of general purpose #LLMs for automated #refactoring.

Take a look at the #AutomatedSoftwareEngineering article at: https://link.springer.com/article/10.1007/s10515-025-00500-0

Exploring the potential of general purpose LLMs in automated software refactoring: an empirical study - Automated Software Engineering

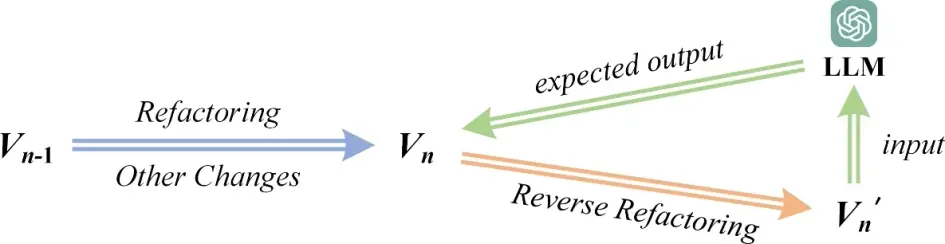

Software refactoring is an essential activity for improving the readability, maintainability, and reusability of software projects. To this end, a large number of automated or semi-automated approaches/tools have been proposed to locate poorly designed code, recommend refactoring solutions, and conduct specified refactorings. However, even equipped with such tools, it remains challenging for developers to decide where and what kind of refactorings should be applied. Recent advances in deep learning techniques, especially in large language models (LLMs), make it potentially feasible to automatically refactor source code with LLMs. However, it remains unclear how well LLMs perform compared to human experts in conducting refactorings automatically and accurately. To fill this gap, in this paper, we conduct an empirical study to investigate the potential of LLMs in automated software refactoring, focusing on the identification of refactoring opportunities and the recommendation of refactoring solutions. We first construct a high-quality refactoring dataset comprising 180 real-world refactorings from 20 projects, and conduct the empirical study on the dataset. With the to-be-refactored Java documents as input, ChatGPT and Gemini identified only 28 and 7 respectively out of the 180 refactoring opportunities. The evaluation results suggested that the performance of LLMs in identifying refactoring opportunities is generally low and remains an open problem. However, explaining the expected refactoring subcategories and narrowing the search space in the prompts substantially increased the success rate of ChatGPT from 15.6% to 86.7%. Concerning the recommendation of refactoring solutions, ChatGPT recommended 176 refactoring solutions for the 180 refactorings, and 63.6% of the recommended solutions were comparable to (or even better than) those constructed by human experts. However, 13 out of the 176 solutions suggested by ChatGPT and 9 out of the 137 solutions suggested by Gemini were unsafe in that they either changed the functionality of the source code or introduced syntax errors, which indicates the risk of LLM-based refactoring.

Will you be our valentine?

Our hearts will certainly flutter when we see your submissions to #AutomatedSoftwareEngineering journal!

Submit your research today at https://link.springer.com/journal/10515!

Interested in the inclusion of AI-based tools in IDEs? Consider submitting to our upcoming #AutomatedSoftwareEngineering special issue!

Learn all about it at https://ause-journal.github.io/25aiide.html.

Happy New Year!

Looking for inspiration? Brownlee et al. have examined the use of LLMs to mutate program code during Genetic Improvement, a process in which programs evolve to improve their quality/performance while ensuring they still function correctly.

Check out the #AutomatedSoftwareEngineering article at https://link.springer.com/article/10.1007/s10515-024-00473-6.

Large language model based mutations in genetic improvement - Automated Software Engineering

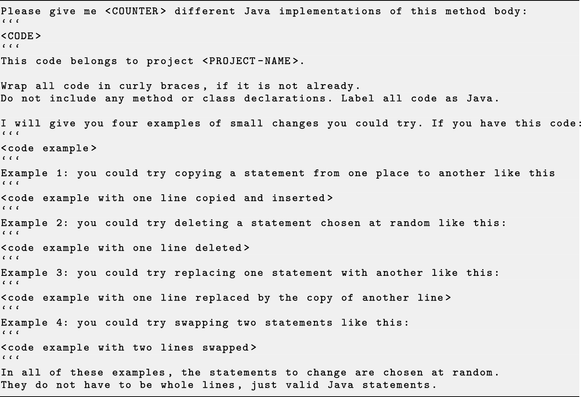

Ever since the first large language models (LLMs) became available, both academics and practitioners have used them to aid software engineering tasks. However, little research has yet been done on combining search-based software engineering (SBSE) and LLMs. In this paper, we evaluate the use of LLMs as mutation operators for genetic improvement (GI), an SBSE approach, to improve the GI search process. In a preliminary work, we explored the feasibility of combining the Gin Java GI toolkit with OpenAI LLMs in order to generate an edit for the JCodec tool. Here we extend this investigation to three LLMs, three types of prompt, and five real-world software projects. We sample the edits at random, as well as using local search. As part of our evaluation, we also conducted a qualitative analysis to understand why LLM-generated code edits break. Our results show that, compared with conventional statement GI edits, LLMs produce fewer unique edits, but these compile and pass tests more often, with the OpenAI model finding test-passing edits 77% of the time. The OpenAI and Mistral LLMs are roughly equal in finding the best run-time improvements. Simpler prompts are more successful than those providing more context and examples. The qualitative analysis reveals a wide variety of areas where LLMs typically fail to produce valid edits, commonly including inconsistent formatting, generating non-Java syntax, or refusing to provide a solution.
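
For readers new to Genetic Improvement, the local search mentioned in the abstract can be pictured with a toy sketch. This is not the Gin toolkit's API and not the authors' setup: the statement list, the `delete_statement` edit, the test oracle, and the cost function are all hypothetical stand-ins. In the study, an LLM would take the place of `delete_statement` as the source of candidate edits.

```python
import random

# Hypothetical toy "program": a list of statements, two of which are redundant.
PROGRAM = ["x = 1", "log()", "y = x + 1", "log()", "return y"]
# Hypothetical behaviour the test suite protects: these statements, in order.
REQUIRED = ["x = 1", "y = x + 1", "return y"]


def delete_statement(program, rng):
    """Stand-in mutation operator: remove one randomly chosen statement."""
    index = rng.randrange(len(program))
    return program[:index] + program[index + 1:]


def passes_tests(program):
    """Stand-in test suite: the required statements must survive, in order."""
    return [s for s in program if s in REQUIRED] == REQUIRED


def cost(program):
    """Stand-in fitness: fewer statements is treated as cheaper to run."""
    return len(program)


def local_search(program, propose_edit, passes_tests, cost, steps=200, seed=0):
    """Keep an edit only if it still passes the tests and lowers the cost."""
    rng = random.Random(seed)
    best = list(program)
    best_cost = cost(best)
    for _ in range(steps):
        candidate = propose_edit(best, rng)
        if not passes_tests(candidate):
            continue  # edits that break the tests are discarded
        candidate_cost = cost(candidate)
        if candidate_cost < best_cost:
            best, best_cost = candidate, candidate_cost
    return best, best_cost
```

Calling `local_search(PROGRAM, delete_statement, passes_tests, cost)` should strip the two redundant `log()` statements while leaving the protected statements in place. The point of the sketch is only the accept/reject loop: the tests act as the correctness gate, and the fitness decides which passing variants are kept.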

Machine-readable specification and intelligent cloud-based execution of logical test cases for automated driving functions - Automated Software Engineering

The scenario-based verification and validation of highly automated driving functions requires extensive testing using a mix of interconnected test methods with varying degrees of virtualization, ranging from faster-than-real-time scenario exploration in software-in-the-loop simulations to in-vehicle testing. The efficiency of scenario-based test procedures is continuously improving, especially in the simulation domain with the introduction of parallel execution in the cloud, parameter variation algorithms and established standards for driving scenario specification. In contrast, current test case specifications are very tool- and project-specific and often not machine-readable, hindering the exchange, reuse and automation of scenario-based tests across all test platforms. This paper presents a novel machine-readable test specification format for scenario-based testing implemented as an XML schema. Its data structure incorporates logical or concrete scenarios within preconditions, inputs and pass criteria for automated driving functions following established test standards such as ISO/IEC/IEEE 29119. The format enables a tool-agnostic specification, reuse and exchange of scenario-based tests for simulation-based and in-vehicle testing. A cloud-simulation workflow has been developed that exploits the automation potential offered by the format. By means of testing a highway ramp-on function, a logical test case is generated and automatically imported into the simulator. For efficiently exploring the parameter space of the logical test case, a novel parameter variation method is applied. The combination of a dedicated test case format, intelligent scenario exploration methods and a state-of-the-art cloud simulation platform results in a highly efficient scenario-based test procedure.

Do you work on intelligent program analysis and testing tools for modern, complex systems?

Consider submitting to our upcoming #AutomatedSoftwareEngineering special issue! Submissions due January 31st.

Read all about it here: https://ause-journal.github.io/25programanalysis.html and submit here: https://link.springer.com/journal/10515

Multi-objective improvement of Android applications - Automated Software Engineering

Non-functional properties, such as runtime or memory use, are important to mobile app users and developers, as they affect user experience. We propose a practical approach and the first open-source tool, GIDroid, for multi-objective automated improvement of Android apps. In particular, we use Genetic Improvement, a search-based technique that navigates the space of software variants to find improved software. We use a simulation-based testing framework to greatly improve the speed of search. GIDroid contains three state-of-the-art multi-objective algorithms, and two new mutation operators, which cache the results of method calls. Genetic Improvement relies on testing to validate patches. Previous work showed that tests in open-source Android applications are scarce. We thus wrote tests for 21 versions of 7 Android apps, creating a new benchmark for performance improvements. We used GIDroid to improve versions of mobile apps where developers had previously found improvements to runtime, memory, and bandwidth use. Our technique automatically re-discovers 64% of existing improvements. We then applied our approach to current versions of software in which there were no known improvements. We were able to improve execution time by up to 35%, and memory use by up to 33% in these apps.
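
The idea of caching the results of method calls can be illustrated with a minimal sketch. GIDroid operates on Android (Java) code and its actual operators are not shown in the abstract, so everything below is an assumption for illustration: the `cache_calls` wrapper, the `slow_lookup` function, and the call counter are hypothetical names. Such a transformation is only safe when the cached call is deterministic and free of side effects, which is why a search process would still rely on tests to validate it.

```python
from functools import wraps

# Hypothetical counter used to show how often the real function body runs.
calls = {"count": 0}


def cache_calls(func):
    """Wrap func so that repeated calls with the same arguments reuse the result."""
    cache = {}

    @wraps(func)
    def wrapper(*args):
        if args not in cache:
            cache[args] = func(*args)  # first call: compute and remember
        return cache[args]             # later calls: answer from the cache

    return wrapper


def slow_lookup(key):
    """Stand-in for an expensive, side-effect-free method."""
    calls["count"] += 1
    return key * 2


cached_lookup = cache_calls(slow_lookup)
```

Calling `cached_lookup(3)` several times returns the same value each time while the underlying function body runs only once, which is the kind of runtime saving a caching edit aims for.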