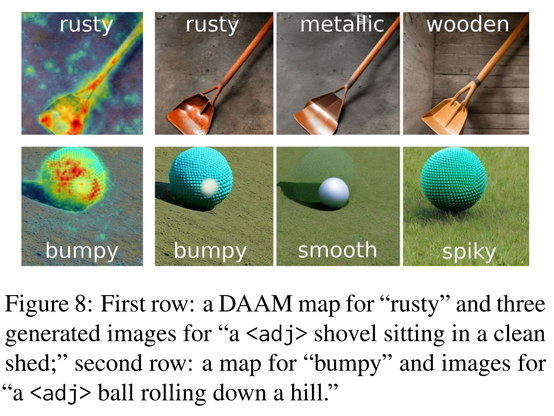

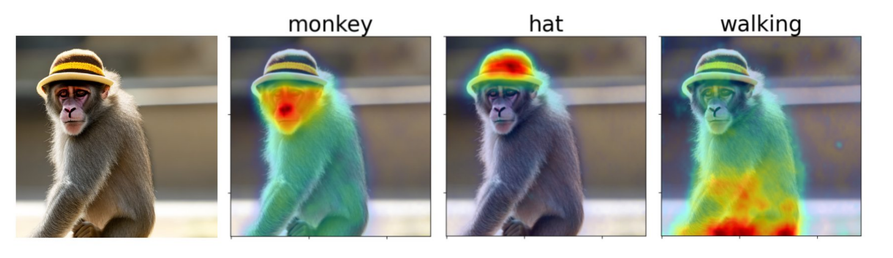

What the DAAM: with our attribution maps, we uncover entanglement in Stable Diffusion. Cohyponyms ,such as "zebra" and "giraffe," worsen generation when together, and adjectives attend beyond the nouns they modify.

Tweet: https://twitter.com/ralph_tang/status/1600912260540817409

Paper: https://arxiv.org/abs/2210.04885

Demo: https://huggingface.co/spaces/tetrisd/Diffusion-Attentive-Attribution-Maps

Codebase: https://github.com/castorini/daam

Raphael Tang on Twitter

“What the DAAM! With our attribution maps, we uncover entanglement in Stable Diffusion. Cohyponyms such as "zebra" and "giraffe" worsen generation when together, and adjectives attend beyond the nouns they modify. Read on for the full story! (1/12) Paper: https://t.co/usApj14H8Y”