Beth Carey

@tomorrowsAI

co-founder of machine intelligence company Pat Inc - creators of machine readable meaning for the world's languages

@garymarcus I asked whether others, like Steven Marlow, found 'that other platform' lonelier now for AI writers since Gary left ...

Implicit in today’s platforms is the requirement for ongoing training, on top of the initial training. ..... the cost of training is high. One of the tech giants we met mentioned that they can spend millions of dollars to create a corpus to use in machine learning! https://buff.ly/3kEiJEj

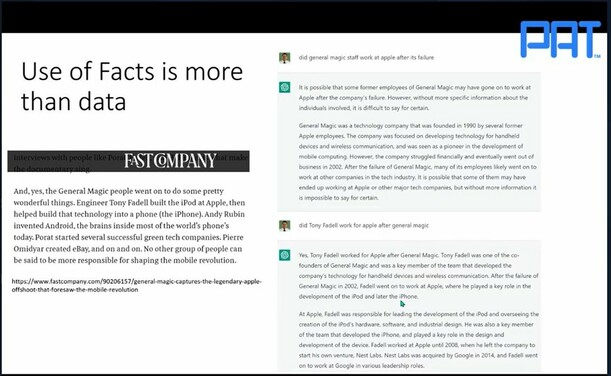

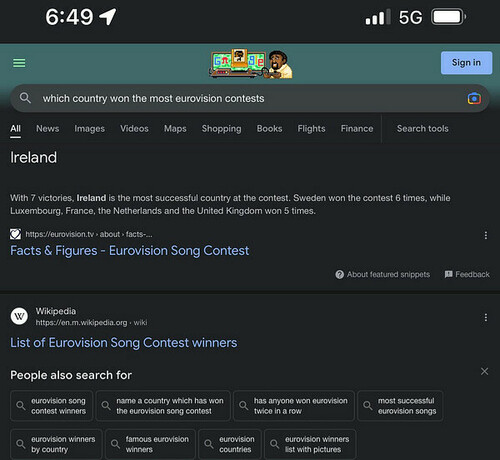

*Even if* the knowledge/facts are in the model, that doesn't mean they can be retrieved or reasoned over. Super clear example from @[email protected]

Realistically, they are "doing amazing tasks that do not require an understanding of the real world and are consequently utterly incapable of determining whether the statistical patterns they discover are meaningful or coincidental" https://buff.ly/3G95faL

Anthropomorphizing leads us to THINK LLMs are on the continuum to AGI, but are they? "Large Language Models (LLMs) like GPT-3 do not use calculators, attempt any kind of logical reasoning, or try to distinguish between fact and falsehood" https://buff.ly/3G95faL

Dileep George, DeepMind researcher ... worried that scaling alone would not be enough to bring us to general intelligence, raising an analogy with dirigibles like the Hindenburg that at one point seemed to be outpacing airplane development https://buff.ly/3Z3iodV

As explained by @[email protected] - even though the increasingly larger LLM text sequencers look like a path to the holy grail, as per @[email protected]'s paper on the 4 #AI fallacies, it's not (necessarily) a continuum. The missing piece, understanding, isn't a bolt-on.

"I long for the day when search engines can reliably spit back text plus genuine, appropriate references....But until those bits all fit together in a way we can trust, I prefer to borrow a memorable phrase from Ariana Grande: Thank U, Next" https://buff.ly/3YUrv0N

“Humans actively maintain imperfect but reliable world models. LLMs don’t and that has consequences” Marcus said. “They can’t be updated incrementally by giving them new facts. They need to be typically retrained to incorporate new knowledge” https://buff.ly/3FYidbo