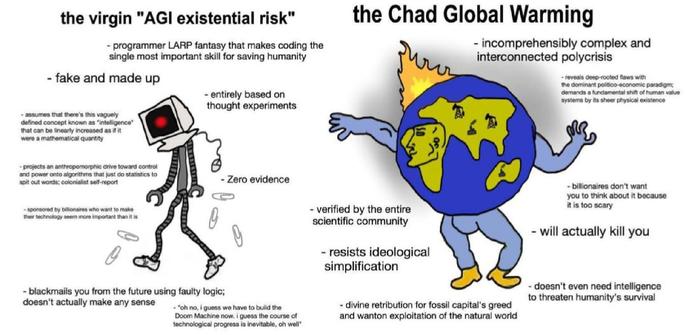

When an agent fails, the first question is almost always about the model. Did it reason correctly? Was the prompt well constructed? Sometimes those are the right questions. But more often, the better question is: what kind of world was the agent inside?

https://thejaymo.net/2026/03/19/ontological-hardness/?utm_source=mastodon&utm_medium=jetpack_social