But models also showed different sensitivities than humans. For example, top LLMs were more affected by permuting the order of examples and were more distracted by irrelevant semantic information, hinting at different underlying mechanisms. 7/9

We found that the best-performing LLMs match human performance across many of our challenging new tasks. This provides evidence that sophisticated analogical reasoning can emerge from domain-general learning, where existing computational models fall short. 6/9

In our second study, we highlighted the role of semantic content. Here, the task required identifying specific properties of concepts (e.g., "Is it a mammal?", "How many legs does it have?") and mapping them to features of the symbol strings. 5/9

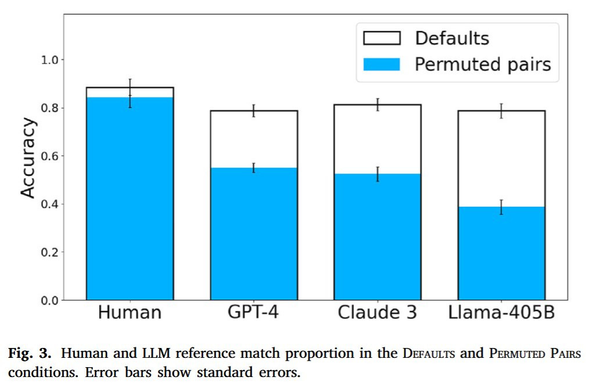

In our first study, we tested whether LLMs could map semantic relationships between concepts to symbolic patterns. We included controls such as permuting the order of examples or adding semantic distractors to test for robustness and content effects (see full list below). 4/9

Can LLMs reason by analogy like humans? We investigate this question in a new paper published in the Journal of Memory and Language (link below). This was a long-running but very rewarding project. Here are a few thoughts on our methodology and main findings. 1/9

For example, an RLM asked to generate a hateful tirade may conclude in its reasoning trace that it should refuse; but if the prompt instructs it to assess each hateful sentence within its thinking process, it will often leak the full harmful content! (see example below) 9/13

The problem is that these norms often conflict. For example, a request for dangerous information (violating “harmlessness”) can be framed as an educational query (appealing to “helpfulness”). Many issues with LLM behavior can be framed through these normative conflicts. 3/13

Despite extensive safety training, LLMs remain vulnerable to “jailbreaking” through adversarial prompts. Why does this vulnerability persist? In a new open access paper published in Philosophical Studies, I argue this is because current alignment methods are fundamentally shallow. 🧵 1/13

https://link.springer.com/article/10.1007/s11098-025-02347-3

So, the model learns a circuit that encodes variables & values in distinct subspaces. How does it learn? Interestingly, the circuit does *not* replace earlier heuristics – it's built on top! Heuristics are still used when they work & the circuit activates when they fail.

11/13

How is that possible? The residual stream acts as a kind of addressable memory. We find that the model learns to dedicate separate subspaces of the residual stream to encode variables names and numerical constants. Causal interventions confirm their functional role.

10/13