| Pronouns | she/her |

| Website | https://anna-ivanova.net/ |

| Google Scholar | https://scholar.google.com/citations?user=hBUjCB0AAAAJ&hl=en |

Anna Ivanova


Anna Ivanova: Language Understanding Goes Beyond Language Processing

It’s been fun working with a brilliant team of coauthors - @kmahowald @ev_fedorenko @ibandlank @NancyKanwisher & Josh Tenenbaum

We’ve done a lot of work refining our views and revising our arguments every time a new big model came out. In the end, we still think a cogsci perspective is valuable - and hope you do too :) 10/10

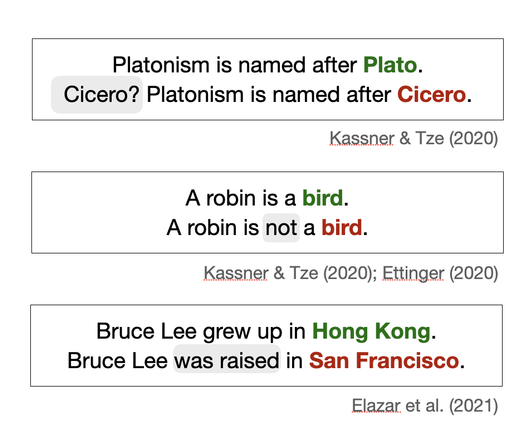

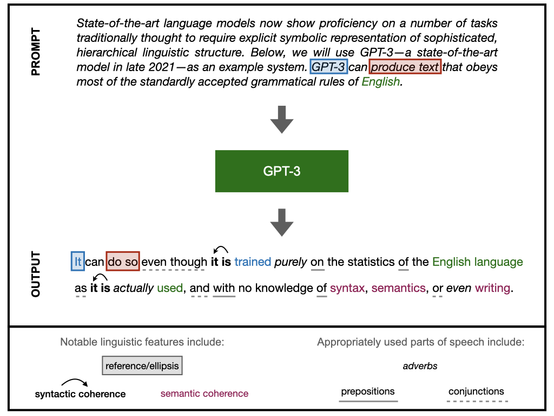

The formal/functional distinction is important for clarifying much of the current discourse around LLMs. Too often, people mistake coherent text generation for thought or even sentience. We call this a “good at language = good at thought” fallacy. 8/

Google's powerful AI spotlights a human cognitive glitch: Mistaking fluent speech for fluent thought

Fluent expression is not always evidence of a mind at work, but the human brain is primed to believe so. A pair of cognitive linguistics experts explain why language is not a good test of sentience.

We argue that the word-in-context prediction objective is not enough to master human thought (even though it’s surprisingly effective for learning much about language!).

Instead, like human brains, models that strive to master both formal & functional language competence will benefit from modular components - either built-in explicitly or emerging through a careful combo of data+training objectives+architecture. 6/