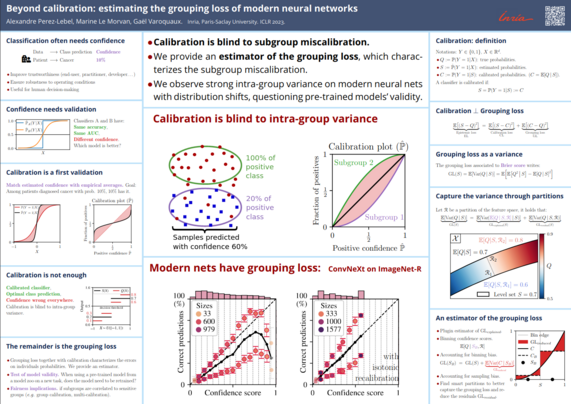

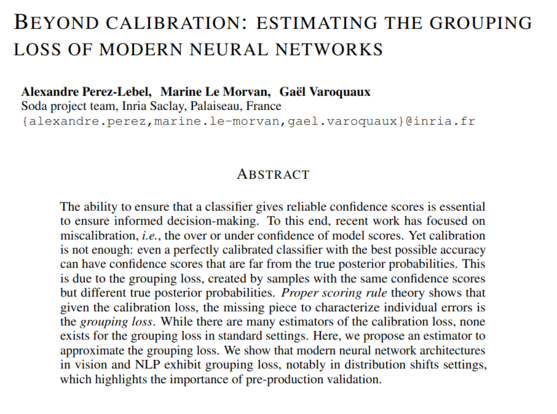

Our poster (#64) this afternoon at #ICLR2023: why calibration is not enough, and how to estimate the remainder to quantify uncertainty

Indeed, even a calibrated classifier can be over-confident on some individuals and under-confident on others. How can we separate this error from irreducible uncertainty?

The challenge is that individual probabilities P(y|X) are never observed; we only see discrete labels. We therefore resort to decompositions of proper scoring rules and estimate bounds on their terms.
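To make this concrete, here is a minimal sketch on a toy population. It assumes the Brier score as the proper scoring rule and uses its standard split into a calibration term, a remainder beyond calibration (often called the grouping loss in the literature), and an irreducible term. The names `true_probs`, `scores`, and `brier_decomposition` are hypothetical, and the true probabilities are known here only because the data are simulated. This is not the estimator from the paper, just an illustration of what is being estimated.

```python
# Toy population: true individual probabilities Q = P(y=1 | x).
# Known here only by construction; in practice they are never observed.
true_probs = [0.2, 0.8, 0.2, 0.8]
# Hypothetical classifier that gives everyone the same confidence score.
scores = [0.5, 0.5, 0.5, 0.5]


def brier_decomposition(scores, true_probs):
    """Split the expected Brier score into three terms (illustrative only)."""
    n = len(scores)
    # C(s) = E[Q | S = s]: average true probability among samples with score s.
    by_score = {}
    for s, q in zip(scores, true_probs):
        by_score.setdefault(s, []).append(q)
    calibrated = {s: sum(qs) / len(qs) for s, qs in by_score.items()}

    pairs = list(zip(scores, true_probs))
    # Calibration term: gap between the score and the calibrated probability.
    calibration = sum((s - calibrated[s]) ** 2 for s, _ in pairs) / n
    # Remainder: gap between calibrated and individual probabilities.
    remainder = sum((calibrated[s] - q) ** 2 for s, q in pairs) / n
    # Irreducible term: label noise that no classifier can remove.
    irreducible = sum(q * (1 - q) for _, q in pairs) / n
    # Expected Brier score, taking the expectation over the labels.
    expected_brier = sum(q * (1 - s) ** 2 + (1 - q) * s ** 2 for s, q in pairs) / n
    return calibration, remainder, irreducible, expected_brier


calibration, remainder, irreducible, expected_brier = brier_decomposition(
    scores, true_probs
)
print(calibration, remainder, irreducible, expected_brier)
```

In this toy case the calibration term is zero, so the classifier looks perfect by calibration alone, yet the remainder is about 0.09: it is over-confident on the individuals with Q = 0.2 and under-confident on those with Q = 0.8. Together with the irreducible term (about 0.16), the three terms add up to the expected Brier score of 0.25.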

Paper: https://openreview.net/forum?id=6w1k-IixnL8&noteId=1w0IdroBaT