"Practical Formal Verification for MLIR Programs"

https://twitter.com/matt_dz

"Practical Formal Verification for MLIR Programs"

Really happy to see stuff happening in e-graphs. From a university in Switzerland nontheless!

Versioned E-Graphs

https://programming-group.com/assets/pdf/papers/2026_Versioned-EGraphs.pdf

from PLDI26

Gluon and Linear Layouts Deep-Dive

Tile-Based GPU Programming with Low-Level Control

Video: https://www.youtube.com/watch?v=oYs_qtuk2Pg

Slides: https://github.com/gpu-mode/lectures/tree/main/lecture_104

GPU MODE Lecture 104; April 22, 2026

Keren Zhou, Peter Bell, Mario Lezcano

Hosted by Mark Saroufim

Topics:

- Gluon: https://triton-lang.org/main/gluon/ - optimized Blackwell Matmul, tutorials & examples

- Proton: Towards Multi-level, Adaptive Profiling for Triton: https://doi.org/10.1109/CGO68049.2026.11395207

- Sanitizers

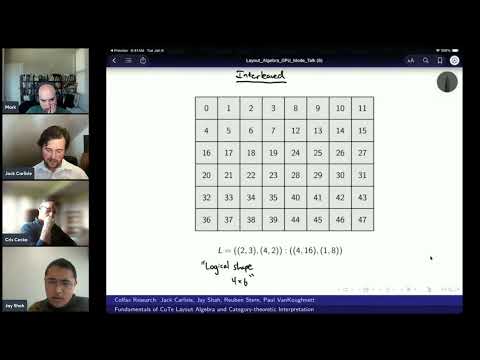

- Linear Layout Visualizer: https://deep-learning-profiling-tools.github.io/linear-layout-viz/

https://yangzhixuan.github.io/NbE.html

My (rather long) blog post on normalisation by evaluation is online now. In this post I show how we can optimise a naive substitution-based applicative-order normaliser of untyped calculus step by step, leading us to reconstruct NbE as "weak-applicative-order normalisation with meta-level laziness, lazy substitution, and de Bruijn levels". We will also examine some "adversarial exploit" that makes NbE slow and use the insights gained from our reconstruction of NbE to optimise NbE.

Dominance is a fundamental concept in compilers based on static single assignment (SSA) form. It underpins a wide range of analyses and transformations and defines a core property of SSA: every use must be dominated by its definition. We argue that this reliance on dominance has become increasingly problematic -- both in terms of precision and applicability to modern higher-order languages. First, control flow overapproximates data flow, which makes dominance-based analyses inherently imprecise. Second, dominance is well-defined only for first-order control-flow graphs (CFGs). More critically, higher-order programs violate the assumptions underlying SSA and classic CFGs: without an explicit CFG, the very notion that all uses of a variable must be dominated by its definition loses meaning. We propose an alternative foundation based on free variables. In this view, $ϕ$-functions and function parameters directly express data dependencies, enabling analyses traditionally built on dominance while improving precision and naturally extending to higher-order programs. We further present an efficient technique for maintaining free-variable sets in a mutable intermediate representation (IR). For analyses requiring additional structure, we introduce the nesting tree -- a relaxed analogue of the dominator tree constructed from variable dependencies rather than control flow. Our benchmarks demonstrate that the algorithms and data structures presented in this paper scale log-linearly with program size in practice.

We report on using an agentic coding assistant (Claude Code, powered by Claude Opus 4.6) to mechanize a substantial Rocq correctness proof from scratch, with human guidance but without human proof writing. The proof establishes semantic preservation for the administrative normal form (ANF) transformation in the CertiCoq verified compiler for Rocq. The closely related continuation-passing style (CPS) transformation in CertiCoq was previously proved correct by human experts over several months. We use this proof as a template and instruct the LLM to adapt the proof technique to the ANF setting, which differs in important technical ways. The resulting ANF proof comprises approximately 7,800 lines of Rocq (larger than the 5,300-line CPS proof) and was developed in approximately 96 hours. We describe the proof technique and report on the experience of developing it with an LLM, discussing both the strengths and limitations of the approach and its implications for verified compiler construction.