RT @osanseviero

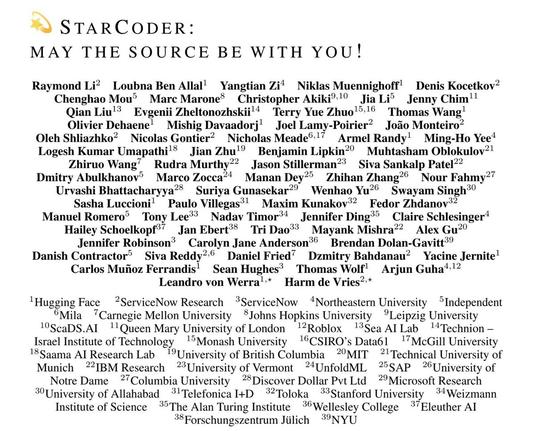

💫StarCoder: May the Source be with You!

🔥15B-parameter LLM with an 8K context window

🥳Trained on permissively licensed code

💻Acts as a tech assistant

🤯80+ programming languages

🚀Open source and open data

💫Online demos

🧑‍💻VS Code plugin

🪅Trained on 1 trillion tokens

Follow along with this amazing experience in the thread! 🧵