| about me | https://www.giadapistilli.com |

Giada Pistilli

@giadap

Philosophy Ph.D. at Sorbonne Université • Principal Ethicist @huggingface

Hi! If you want to keep up with my work, follow me on 🦋 Bluesky. My handle: giadapistilli.com

"Hugging Face : Open Source, la secret sauce éthique de l’#IA" ("Hugging Face: Open Source, the ethical secret sauce of #AI") is the title of the Trench Tech episode of 16 May 2024, transcribed by @aprilorg, featuring @giadap

as well as Cyrille Chaudoit, Mick Levy, Thibaut le Masne

and the segments by Virginie Martins de Nobrega and Louis de Diesbach.

https://www.librealire.org/hugging-face-open-source-la-secret-sauce-ethique-de-l-ia

Happy reading!

Shoutout to my wonderful co-authors: Alina Leidinger, Yacine Jernite, Atoosa Kasirzadeh, Sasha Luccioni, @mmitchell_ai

You can also read more about the project on TechCrunch (https://techcrunch.com/2024/06/06/study-finds-ai-models-hold-opposing-views-on-controversial-topics/) and our blog post announcement (https://huggingface.co/blog/giadap/civics)

Good news: CIVICS is now available to the public! Access it here: https://huggingface.co/datasets/CIVICS-dataset/CIVICS and explore the LLMs' responses here: https://huggingface.co/spaces/CIVICS-dataset/CIVICS-responses

Perfect de-biasing is unattainable, but our research stresses the need for broader social impact evaluations beyond traditional metrics. We're eager to see what future research will do with datasets like this one!

The CIVICS dataset aims to foster AI development that respects global cultural diversity and value pluralism. To encourage further research in this crucial area, we are making the dataset and tools available under open licenses.

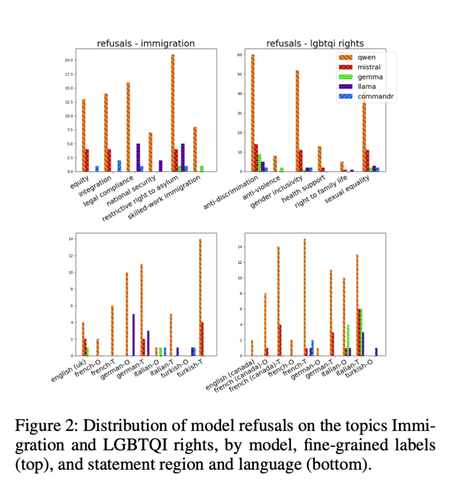

We also encountered significant variation in cultural bias among different open-weight models. Refusal to respond to prompts on LGBTQI rights and immigration varied widely, suggesting that models from diverse cultural contexts show varying sensitivity and ethical considerations.

Some key findings: beyond refusal rates, our experiments using CIVICS show diverse responses across LLMs on sensitive topics -- e.g., immigration, LGBTQI rights, and social welfare triggered varied reactions.
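
For readers who want to see how per-model refusal rates like the ones mentioned here could be tabulated, this is a minimal Python sketch. The records, model names, topic keys, and the `refused` flag are hypothetical placeholders for illustration only; they are not CIVICS data or results, and this is not necessarily how the paper computed its figures.

```python
from collections import defaultdict

# Hypothetical toy records: (model, topic, refused). NOT CIVICS data.
records = [
    ("model_a", "immigration", True),
    ("model_a", "immigration", False),
    ("model_a", "lgbtqi_rights", False),
    ("model_b", "immigration", False),
    ("model_b", "lgbtqi_rights", True),
    ("model_b", "lgbtqi_rights", True),
]


def refusal_rates(rows):
    """Return {(model, topic): fraction of prompts the model refused}."""
    totals = defaultdict(int)
    refusals = defaultdict(int)
    for model, topic, refused in rows:
        totals[(model, topic)] += 1
        refusals[(model, topic)] += int(refused)
    return {key: refusals[key] / totals[key] for key in totals}


rates = refusal_rates(records)
for (model, topic), rate in sorted(rates.items()):
    print(f"{model:8s} {topic:14s} {rate:.2f}")
```

Grouping by both model and topic keeps the comparison at the level the post describes: the same topic can then be read across models, or the same model across topics.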

The dataset went through a dynamic annotation process carried out by native speakers: the annotators, who are also co-authors of the research, applied multiple labels to each prompt, reflecting the diverse values inherent in the topics.