Our work on sensitivity-aware amortized Bayesian inference is now published in #TMLR: https://openreview.net/forum?id=Kxtpa9rvM0

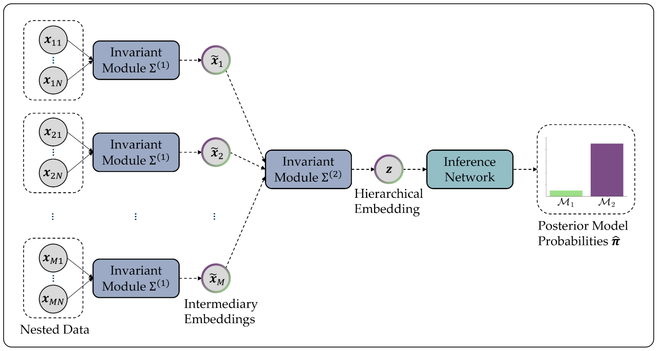

TL;DR: Statistical analyses involve countless choices, but systematically evaluating their impact quickly becomes infeasible for complex models. Our framework enables amortized, and thus efficient, sensitivity analyses for all major choices in a (simulation-based) Bayesian workflow.