If you are into the hippocampus, PFC, cognitive maps, etc., you have probably encountered Successor Representations (SR). But SR is often misunderstood and ascribed properties it doesn’t have. In this blog post I describe some of the limitations of SR as a model of cognitive maps.

https://blog.dileeplearning.com/p/a-critique-of-successor-representations

A critique of successor representations as a model of learning in the hippocampus

The successor representation (SR) is a popular, influential, and often-cited model of place cells in the hippocampus.

If civil engineering was like AI ...

#AGIComics

Planning to participate in the ARC prize to win a million bucks? Read this blog first!

https://blog.dileeplearning.com/p/how-to-get-a-million-bucks-quick

How to get a million bucks: quick thoughts on cognitive programs and the ARC challenge.

The abstract reasoning corpus (ARC) challenge by Francois Chollet has gained renewed attention due to the 1M prize announcement. This challenge is interesting to me because the idea of “abstraction” as “synthesizing cognitive programs” is something my team has worked on and

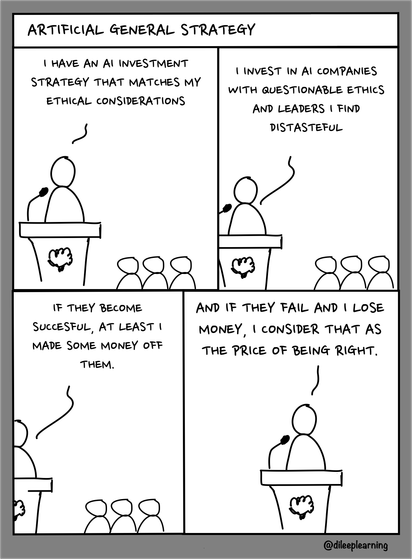

#AGIComics has finally figured out its investment strategy...

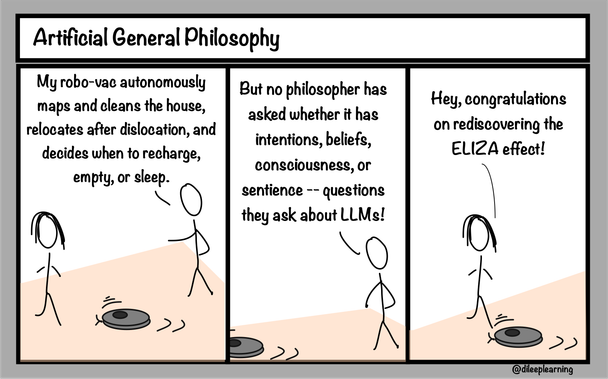

#AGIComics discovers why philosophers leave a vacuum when it comes to consciousness...

9️⃣ /Final.

The paper has lots of other fun experiments too. Check it out here:

https://arxiv.org/abs/2302.07350

Graph schemas as abstractions for transfer learning, inference, and planning

Transferring latent structure from one environment or problem to another is a mechanism by which humans and animals generalize with very little data. Inspired by cognitive and neurobiological insights, we propose graph schemas as a mechanism of abstraction for transfer learning. Graph schemas start with latent graph learning where perceptually aliased observations are disambiguated in the latent space using contextual information. Latent graph learning is also emerging as a new computational model of the hippocampus to explain map learning and transitive inference. Our insight is that a latent graph can be treated as a flexible template -- a schema -- that models concepts and behaviors, with slots that bind groups of latent nodes to the specific observations or groundings. By treating learned latent graphs (schemas) as prior knowledge, new environments can be quickly learned as compositions of schemas and their newly learned bindings. We evaluate graph schemas on two previously published challenging tasks: the memory & planning game and one-shot StreetLearn, which are designed to test rapid task solving in novel environments. Graph schemas can be learned in far fewer episodes than previous baselines, and can model and plan in a few steps in novel variations of these tasks. We also demonstrate learning, matching, and reusing graph schemas in more challenging 2D and 3D environments with extensive perceptual aliasing and size variations, and show how different schemas can be composed to model larger and more complex environments. To summarize, our main contribution is a unified system, inspired and grounded in cognitive science, that facilitates rapid transfer learning of new environments using schemas via map-induction and composition that handles perceptual aliasing.

8️⃣ Schemas don't have to match perfectly. We show this with a fun experiment on MNIST digits: imagine an agent living inside a digit. No two examples of the same digit are identical. Can the agent recognize new instances of a digit by transferring the schema from another instance? The answer is yes!

7️⃣ Notice the difference in speed of learning when composing with schemas vs without.

6️⃣ The agent can also compose known schemas to learn a large environment quickly. In this case, the visual observations are different AND the environment needs to be expressed as a composition.

5️⃣ With this, in new environments, you can drive behavior without even seeing most of the environment -- for example, you can plan shortcuts through unexperienced portions of the environment.