| Homepage | http://dice-research.org |

| Age | 42 |

DICE


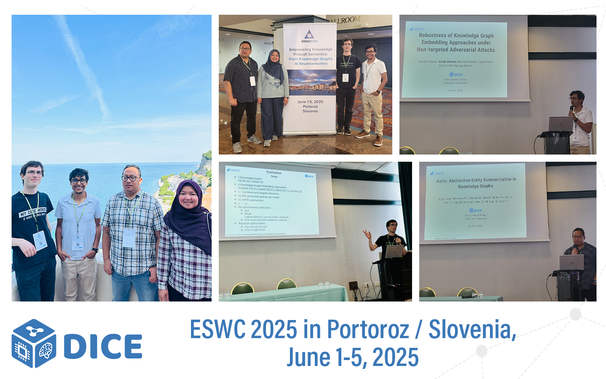

🎉 Our group presented 3 papers at @eswc_conf in Portorož, Slovenia 🇸🇮!

1️⃣ "Robustness Evaluation of Knowledge Graph Embedding Models Under Non-targeted Attacks"

🔗 https://link.springer.com/chapter/10.1007/978-3-031-94575-5_15

2️⃣ "Evaluating Approximate Nearest Neighbour Search Systems on Knowledge Graph Embeddings"

🔗 https://link.springer.com/chapter/10.1007/978-3-031-94575-5_4

3️⃣ "ANTS: Abstractive Entity Summarization in Knowledge Graphs"

🔗 https://link.springer.com/chapter/10.1007/978-3-031-94575-5_8

Thanks to the ESWC community and the organising team for a great event!🙌😊

#PhDDefense #AI #NeuroSymbolicAI

➡️ All information about the position and how to apply can be found here: https://uni-paderborn.de/fileadmin/zv/4-4/stellenangebote/Kennziffer6930.pdf

➡️ You can find all the details here: https://www.uni-paderborn.de/fileadmin/zv/4-4/stellenangebote/Kennziffer6872_-_Englisch.pdf

The other DICE contribution at #COLING2025 comes from Nikit, who presented "LOLA - An Open-Source Massively Multilingual Large Language Model" by Nikit Srivastava, Denis Kuchelev, Tatiana Moteu Ngoli, Kshitij Shetty, Michael Röder, @hamadazahera, Diego Moussallem & Axel Ngonga. 🤩👏

👉 Want to find out more? Find the paper here: https://arxiv.org/abs/2409.11272

LOLA -- An Open-Source Massively Multilingual Large Language Model

This paper presents LOLA, a massively multilingual large language model trained on more than 160 languages using a sparse Mixture-of-Experts Transformer architecture. Our architectural and implementation choices address the challenge of harnessing linguistic diversity while maintaining efficiency and avoiding the common pitfalls of multilinguality. Our analysis of the evaluation results shows competitive performance in natural language generation and understanding tasks. Additionally, we demonstrate how the learned expert-routing mechanism exploits implicit phylogenetic linguistic patterns to potentially alleviate the curse of multilinguality. We provide an in-depth look at the training process, an analysis of the datasets, and a balanced exploration of the model's strengths and limitations. As an open-source model, LOLA promotes reproducibility and serves as a robust foundation for future research. Our findings enable the development of compute-efficient multilingual models with strong, scalable performance across languages.

First conference in 2025! 🤩 Many greetings to Daniel, who presented ‘Contextual Augmentation for Entity Linking using Large Language Models’ by Daniel Vollmers, @hamadazahera, Diego Moussallem and Axel Ngonga at #COLING2025 in Abu Dhabi 🇦🇪 this week. 👏👨‍💻 #DICEontour

👀 Would you like to find out more about the paper? Take a look here: https://papers.dice-research.org/2025/COLING_EL_Augmentation/public.pdf