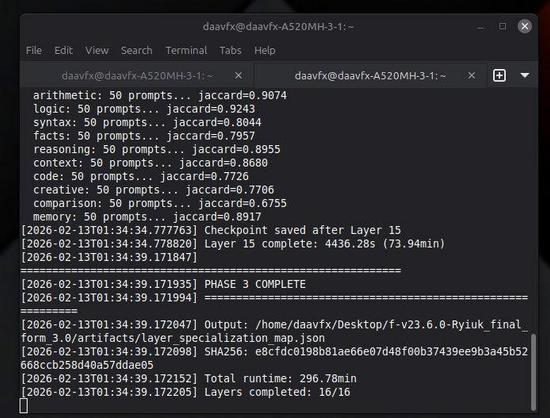

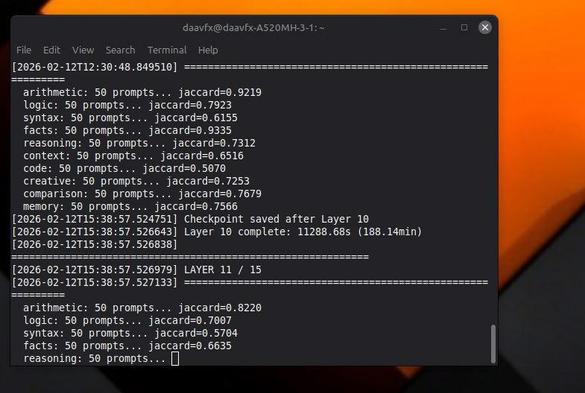

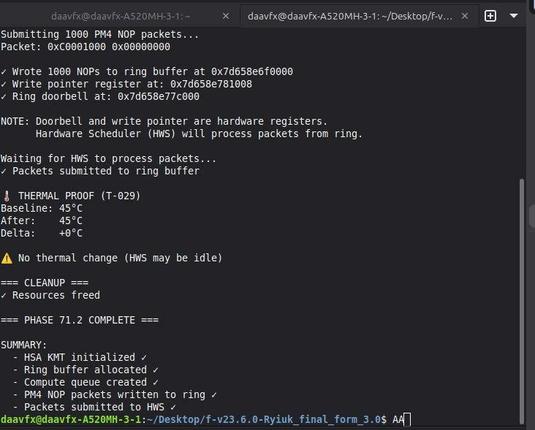

It took over 2 days, in 3 stages, thanks to my PC rebooting... Honestly, I was expecting the architecture to have redundancy, but this just proves it: LLMs are one of the biggest achievements in human history, and we actually build them properly (I'm building, just better).

Critical Discoveries

1. Layer 0 is THE GATE (99.87% divergence)

Removing Layer 0 = Total model failure

Cannot process ANY input

Absolutely irreplaceable
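
For anyone who wants to try this kind of layer-removal test, here is a minimal sketch of the idea on a toy model. It is not the setup used for the numbers in this post. Everything in it is an assumption for illustration: the toy residual layers stand in for transformer blocks, the names `make_layer`, `forward` and `divergence_pct` are hypothetical, and "divergence" is taken to mean the percentage of inputs whose top-1 output changes when one layer is skipped.

```python
import math
import random


def make_layer(dim, rng):
    """Toy residual layer x -> x + tanh(W x); a stand-in for a transformer block."""
    w = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(dim)]

    def layer(x):
        return [
            xi + math.tanh(sum(wij * xj for wij, xj in zip(row, x)))
            for xi, row in zip(x, w)
        ]

    return layer


def forward(layers, x, skip=None):
    """Run x through every layer except the one at index `skip` (the ablation)."""
    for i, layer in enumerate(layers):
        if i != skip:
            x = layer(x)
    return x


def argmax(v):
    """Index of the largest value, used here as the toy 'predicted token'."""
    return max(range(len(v)), key=v.__getitem__)


def divergence_pct(layers, inputs, skip):
    """Assumed metric: percent of inputs whose top-1 output changes without layer `skip`."""
    changed = sum(
        argmax(forward(layers, x)) != argmax(forward(layers, x, skip=skip))
        for x in inputs
    )
    return 100.0 * changed / len(inputs)


rng = random.Random(0)  # fixed seed so runs are repeatable
DIM, N_LAYERS, N_INPUTS = 8, 6, 200
layers = [make_layer(DIM, rng) for _ in range(N_LAYERS)]
inputs = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(N_INPUTS)]

# Ablate one layer at a time and compare against the full model.
results = {i: divergence_pct(layers, inputs, i) for i in range(N_LAYERS)}
for i, pct in results.items():
    print(f"layer {i}: {pct:.2f}% divergence")
```

The toy numbers say nothing about a real LLM. The point is only the loop: run the full model, run it again with one layer skipped, and count how often the output changes.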

2. Early Layers are Essential (95%+ divergence)