- Blog: https://crude2refined.wordpress.com/
- GitHub: https://github.com/myazdani
- @crude2refined

Mehrdad Yazdani


OLMoE: Open Mixture-of-Experts Language Models

We introduce OLMoE, a fully open, state-of-the-art language model leveraging sparse Mixture-of-Experts (MoE). OLMoE-1B-7B has 7 billion (B) parameters but uses only 1B per input token. We pretrain it on 5 trillion tokens and further adapt it to create OLMoE-1B-7B-Instruct. Our models outperform all available models with similar active parameters, even surpassing larger ones like Llama2-13B-Chat and DeepSeekMoE-16B. We present various experiments on MoE training, analyze routing in our model showing high specialization, and open-source all aspects of our work: model weights, training data, code, and logs.
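The "7B total, 1B active per token" idea comes from sparse routing: a small router scores every expert, and only the top-k experts actually run for each token. Below is a minimal pure-Python sketch of top-k routing for a single token. It assumes a linear router, softmax gating, and renormalized top-k weights. The names (`moe_forward`, `softmax`) and the toy experts are hypothetical, and this is not OLMoE's actual implementation.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: Vector,
    router_weights: Sequence[Vector],
    experts: Sequence[Callable[[Vector], Vector]],
    k: int,
) -> Vector:
    """Sparse MoE layer for one token: only the top-k experts are evaluated."""
    # Router logit per expert: dot product of that expert's router row with x.
    logits = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in router_weights]
    probs = softmax(logits)
    # Keep the k experts with the highest routing probability.
    top = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:k]
    # Assumption: renormalize the selected probabilities so they sum to 1
    # (one common choice; implementations differ here).
    norm = sum(probs[i] for i in top)
    out = [0.0] * len(x)
    for i in top:
        y = experts[i](x)  # only these k experts run; the others are skipped
        gate = probs[i] / norm
        out = [o + gate * y_j for o, y_j in zip(out, y)]
    return out


# Toy usage: 4 hypothetical experts that each scale the input.
experts = [lambda v, s=s: [s * t for t in v] for s in (1.0, 2.0, 3.0, 4.0)]
router = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
print(moe_forward([2.0, 0.0], router, experts, k=1))  # → [2.0, 0.0]
```

In the toy call only 1 of the 4 experts is evaluated for this token, which is why a sparse model's active parameter count can be much smaller than its total parameter count.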

Neat free tool for generating directory tree structures in ASCII: https://ascii-tree-generator.com/

Pretty useful when figuring out a project's structure and brainstorming. Curious how the ASCII renders here:

```
my_project/
├─ models/
│  ├─ model_1.py
│  ├─ model_2.py
├─ data/
│  ├─ data_prep.py
│  ├─ meta_data.json
├─ results/
├─ checkpoints/
├─ trainer.py
├─ runner.py
```
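To produce the same kind of tree in code, here is a small sketch that renders a nested dict as ASCII in the style above. The `render_tree` helper and the encoding (directories as dicts, files as `None`) are hypothetical choices for illustration, not how ascii-tree-generator.com works internally.

```python
from typing import Dict, List, Optional

# A tree node: a directory maps child names to sub-trees; a file maps to None.
Tree = Dict[str, Optional["Tree"]]


def render_tree(root: str, tree: Tree) -> str:
    """Render a nested dict as an ASCII directory tree.

    Every entry gets a "├─ " connector and each nesting level
    is indented with "│  ".
    """
    lines: List[str] = [root.rstrip("/") + "/"]

    def walk(node: Tree, prefix: str) -> None:
        for name, child in node.items():
            if child is None:  # a file
                lines.append(f"{prefix}├─ {name}")
            else:  # a directory: add a trailing slash and recurse
                lines.append(f"{prefix}├─ {name}/")
                walk(child, prefix + "│  ")

    walk(tree, "")
    return "\n".join(lines)


project: Tree = {
    "models": {"model_1.py": None, "model_2.py": None},
    "data": {"data_prep.py": None, "meta_data.json": None},
    "results": {},
    "checkpoints": {},
    "trainer.py": None,
    "runner.py": None,
}
print(render_tree("my_project", project))
```

To render a real directory instead, the nested dict could be built by walking the filesystem with `pathlib.Path.iterdir()` and `Path.is_dir()`.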

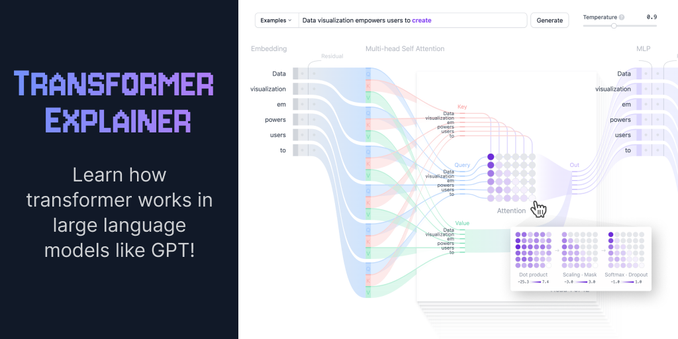

Pretty neat transformer visualization project. Wonder if this could help with debugging 😜