P.S.: I will be attending HCOMP '23 and later the MP2 Workshop at NeurIPS! If you're also going, I'd be happy to catch up in person!

(n+1/n)

I'm currently looking for industry and academia positions. Please reach out if you're hiring! ❤️

https://homes.cs.washington.edu/~cqz/

(n/n)

Quan Ze Chen - University of Washington

Personal Profile for Quanze Chen

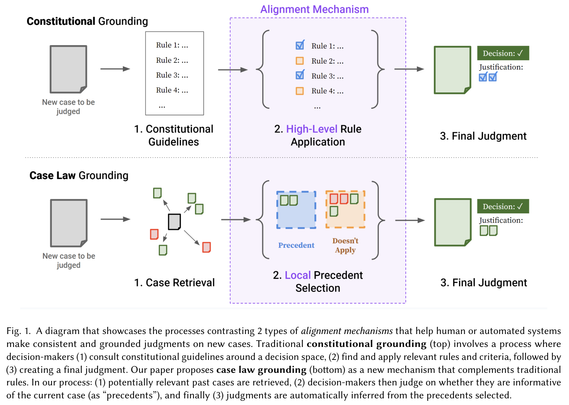

We follow this work up with Case Law for AI Policy, where we present a democratic, case-law-based process for constructing an AI policy that aligns behaviors to community preferences.

https://social.cs.washington.edu/case-law-ai-policy/ (Website WIP)

(5/n)

Case Law for AI Policy - Project Website

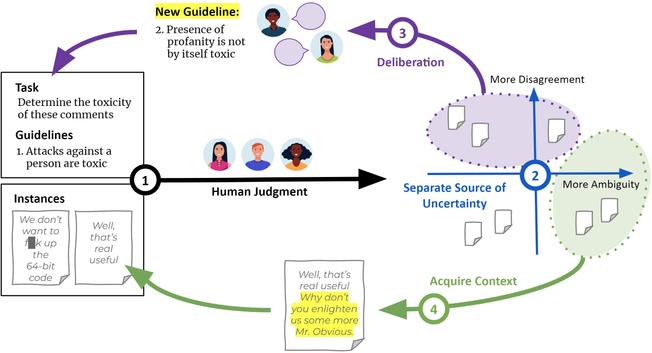

**Defining Socially-Constructed Concepts for AI Alignment**: In Case Law Grounding, we introduce a set of parallel human and AI (via prompting) workflows that use cases as a medium to define preferences around nuanced socially-constructed concepts.

arxiv.org/abs/2310.07019

(4/n)

In the follow-up, Judgment Sieve, we build upon these measurements to show that effectively reducing uncertainty requires targeting interventions at its source (ambiguity versus disagreement).

arxiv.org/abs/2305.01615

(3/n)

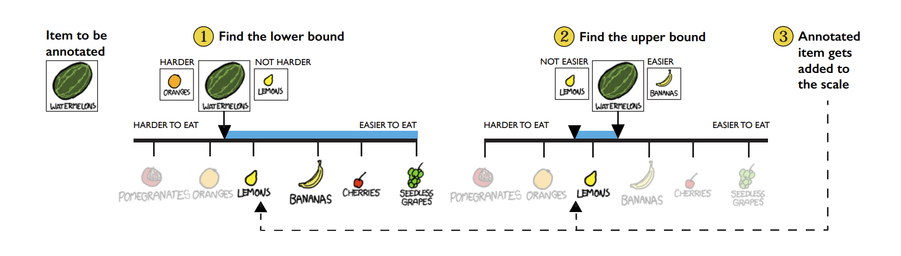

**Capturing and reducing uncertainty in human judgments**: In Goldilocks, we introduce a novel annotation tool that uses ranges and anchors to capture ambiguity and disagreement separately in scalar rating tasks.

arxiv.org/abs/2108.01799

(2/n)

Hello Fediverse! 🐘

Excited to announce I am going on the job market this cycle! (industry & academia)

I work on building uncertainty-aware tools and workflows that support capturing and defining socially-constructed concepts at scale.

Here are some examples of my work:

(1/n)

@socialfutureslab Next

@cqz gave a talk on Wed on targeted interventions to reduce uncertainty in judgments. Paper here: https://dl.acm.org/doi/10.1145/3610074

He also discussed how it fits into his broader research trajectory and agenda, as he's heading on to the job market this year!

Judgment Sieve: Reducing Uncertainty in Group Judgments through Interventions Targeting Ambiguity versus Disagreement | Proceedings of the ACM on Human-Computer Interaction

When groups of people are tasked with making a judgment, the issue of uncertainty often arises. Existing methods to reduce uncertainty typically focus on iteratively improving specificity in the overall task instruction. However, uncertainty can arise ...

Our lab has three paper talks! But I want to highlight this one because @cqz is on the job market this year!! He works in crowdsourcing and human-AI systems. Make sure to check out his presentation on Wednesday.

https://hci.social/@cqz/110663137778424179

When groups of people make collective judgments, it's not surprising to get uncertainty around the final answer.

Maybe the question is hard? Or perhaps people disagree?

But how do we approach this uncertainty to reach consensus?

Paper: http://arxiv.org/abs/2305.01615

#CSCW2023

(1/n)

New paper #hcomp2023 led by an ugrad in my lab, Andre Ye and @cqz! We look at how to represent uncertainty in 2D annotations for computer vision models. In this case, we focus on medical image segmentation, where uncertainty is important for experts to interpret.

https://arxiv.org/abs/2308.07528

Confidence Contours: Uncertainty-Aware Annotation for Medical Semantic Segmentation

Medical image segmentation modeling is a high-stakes task where understanding of uncertainty is crucial for addressing visual ambiguity. Prior work has developed segmentation models utilizing probabilistic or generative mechanisms to infer uncertainty from labels where annotators draw a singular boundary. However, as these annotations cannot represent an individual annotator's uncertainty, models trained on them produce uncertainty maps that are difficult to interpret. We propose a novel segmentation representation, Confidence Contours, which uses high- and low-confidence "contours" to capture uncertainty directly, and develop a novel annotation system for collecting contours. We conduct an evaluation on the Lung Image Dataset Consortium (LIDC) and a synthetic dataset. From an annotation study with 30 participants, results show that Confidence Contours provide high representative capacity without considerably higher annotator effort. We also find that general-purpose segmentation models can learn Confidence Contours at the same performance level as standard singular annotations. Finally, from interviews with 5 medical experts, we find that Confidence Contour maps are more interpretable than Bayesian maps due to representation of structural uncertainty.