One of the most puzzling questions in Vedic Sanskrit and Avestan is how word-final -ah turns into -o.

Here's a new proposal that explains the super complex development of voiced fricatives in Indo-Iranian, with a special focus on Avestan and Old Persian.

Also a cool fact: Ahura Mazda is written in Assyrian as as-sa-ra ma-za-áš.

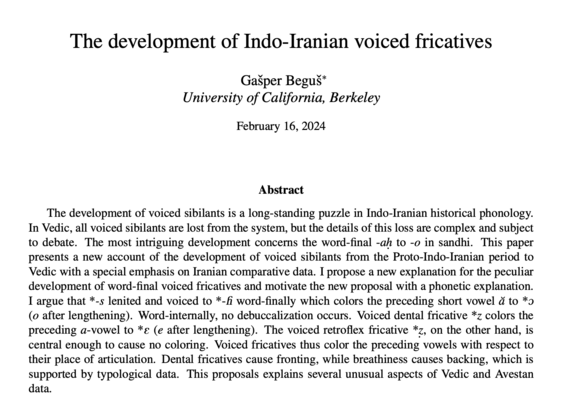

Paper (it's been great to cite works from the 19th century: 1882, 1888, 1889...):

The development of Indo-Iranian voiced fricatives (lingbuzz/007911)

The development of voiced sibilants is a long-standing puzzle in Indo-Iranian historical phonology. In Vedic, all voiced sibilants are lost from the system, but the details of this loss are complex an…