Thomas Baekdal

I just finished my biggest project using Claude AI yet (I was rebuilding an internal system)... It took place over 830 chat messages, and when I exported the entire thing to PDF, the chat conversation was 293 pages long. Claude also created several documentation files + 9450 lines of code.

The chat session got so big that Firefox had trouble loading the page ;)

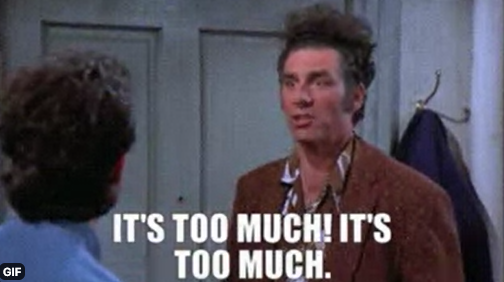

Reminder: there is no energy crisis. The price of electricity etc. is completely fine. This is an oil/petrol crisis.

Data from Denmark, where more than 70% of our electricity is generated by renewables.

Seagate: DKK 5.025

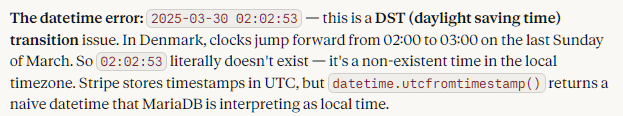

I had the weirdest problem. In my accounting system, my importer from Stripe kept failing on a single transaction, dated "2025-03-30 02:02:53" ... It looks fine, right?

Well, what if I tell you that this time doesn't exist in Denmark?

The problem here is that Stripe, being a US company, follows a different daylight saving time schedule than Denmark.

In the US, DST started on 9 March 2025, while in Denmark it started on 30 March 2025, when the clocks jumped from 02:00 straight to 03:00. So while that time is valid in the USA, it doesn't exist in Denmark.

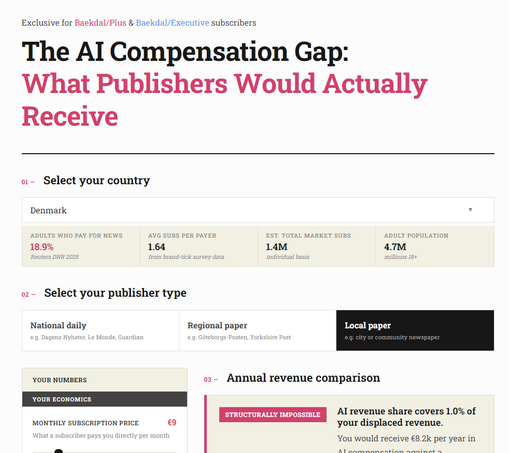
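For anyone hitting the same importer problem, here is a minimal Python sketch of how such a "missing" time can be detected. It is not the actual importer code: the function name `exists_in_zone` is hypothetical, and it assumes Python 3.9+ with the IANA time zone data available (on some systems that means installing the `tzdata` package). The idea is to round-trip the wall-clock time through UTC; a time that falls in the DST gap comes back shifted.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

COPENHAGEN = ZoneInfo("Europe/Copenhagen")
NEW_YORK = ZoneInfo("America/New_York")


def exists_in_zone(naive: datetime, tz: ZoneInfo) -> bool:
    """Return True if the naive wall-clock time actually occurs in tz.

    Hypothetical helper: a time inside a DST gap does not survive a
    round trip through UTC, so the comparison below fails for it.
    """
    local = naive.replace(tzinfo=tz)
    round_trip = local.astimezone(timezone.utc).astimezone(tz)
    return round_trip.replace(tzinfo=None) == naive


# The timestamp from the failing transaction, parsed without a time zone.
stamp = datetime.strptime("2025-03-30 02:02:53", "%Y-%m-%d %H:%M:%S")

print(exists_in_zone(stamp, COPENHAGEN))  # False: inside the 02:00-03:00 gap
print(exists_in_zone(stamp, NEW_YORK))    # True: the US switched on 9 March

# Assumption (not stated in the post): if the export is really in UTC,
# attaching UTC first and then converting avoids the gap entirely.
as_utc = stamp.replace(tzinfo=timezone.utc)
print(as_utc.astimezone(COPENHAGEN))  # 2025-03-30 04:02:53+02:00
```

The safer design is usually to store and import timestamps with an explicit zone (or in UTC) and only convert to local time for display, so a local DST gap can never invalidate a record.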

So... over the past few weeks, I have received several emails from people complaining about me using AI. Basically, they didn't think it was ethical considering how much damage this is causing publishers. So, I wrote an article about how I see this:

My view, as a media analyst, about using AI - https://baekdal.com/newsletter/my-view-as-a-media-analyst-about-using-ai/

I just published my latest newsletter, where I test whether Cloudflare's new web scraper is protecting publishers as they promised us ... and it isn't: https://baekdal.com/newsletter/cloudflare-just-betrayed-every-single-publisher/

It is not stopped by Cloudflare AI Crawl Control; you cannot even configure it there. It's not blocked by Cloudflare's managed robots.txt file. It's not identified as a verified bot, which would have allowed you to block it via your managed bot rules ... you can block it manually by setting up custom rules, but not automatically: