Monoid Mary

the quote in the first tweet is from this Verge piece, which i am not taking issue with, just that people should have realized this fundamental problem with pursuing AI via LLMs long ago

"Cutting edge research shows language is not the same as intelligence." Oh? You don't say? I could have told them this a long time ago!

https://ctrlshifted.substack.com/p/vignette-corpus-linguistics-1999

However, recent advances in both AI and proof formalization have begun to vastly accelerate and automate the first two components of this process. This is leading to a new type of "impedance mismatch": problems for which solutions can be rapidly generated and verified in a mostly automated process, but for which no human author has understood the arguments well enough to initiate the (much slower) digestion process.

In fact, with the current cultural incentives that reward the first authors to "solve" the problem, rather than the later authors who "digest" the solution, one may end up with the perverse situation in which an AI-generated (and formally verified) solution to a problem that is presented to the community without any significant digestion may actually *inhibit* the progress of the field that the problem lies in, by discouraging any further attempts to work on the problem, simplify and explain the proof, and extract broader insights. (2/3)

there was a moment in time when we had the gasoline and the level of development such that fairly ordinary people could do things like raft the Grand Canyon or climb a mountain in Glacier but not everyone had started to do it yet, so the things you would see were still somewhat wild and free. there was still wildlife around. you could see the stars.

that moment is gone, most people don't know. but we're reading The Monkey Wrench Gang right now and it's making me nostalgic for that brief time.

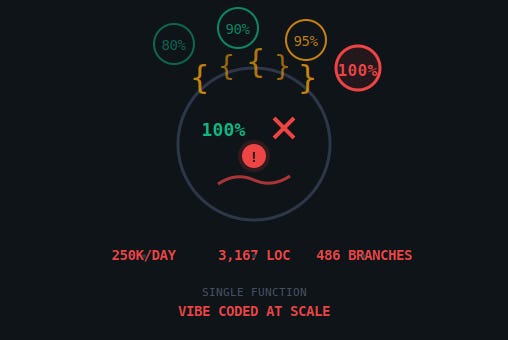

"And here’s the pattern that makes it sustainable, temporarily: when you have billions in revenue and functionally unlimited compute, you feed technical debt with resources instead of fixing it. The function is 3,167 lines? Don’t refactor, add more RAM."

https://techtrenches.dev/p/the-snake-that-ate-itself-what-claude

https://joyofhaskell.com/posts/2017-05-07-do-notation.html