
Awww, no hanging to end this episode. I thought that would be a recurring thing. Thanks #TVMysteries gang!

I’ll be hosting SHADOW IN THE CLOUD later tonight on #CultShelf, plus #ActionMatinee and music from Metallica’s Load album on #StewARama this Saturday! Have a great week!

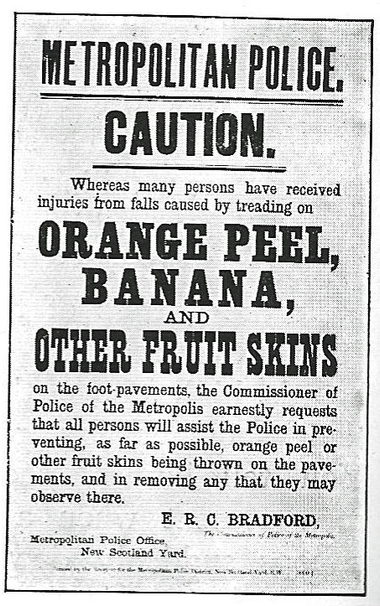

Here is an old poster I found on Wikimedia.

This is a poster from London around 1900.

The description notes: "Col. Sir Edward Bradford was Commissioner of the Metropolitan Police from 1890 to 1903 and by all accounts he was an excellent Commissioner and probably the only one with a missing left arm which was lost during an encounter with a Bengal Tigress in India where he was first a soldier and then civil servant."

Well there we go

Thanks everyone and @SesameSquirrel for hosting!

lollipop lady was having it off with the deceased

<<turns on machinery made 250 years ago>>

#TVMysteries
