Months before it started, it was clear that the #AIActionSummit was going to be a United Arab Emirates regime influence operation on French assets (started before 2021).

Happy to have boycotted every bit of it. Sad for France's sovereignty… but will not weep for it either.

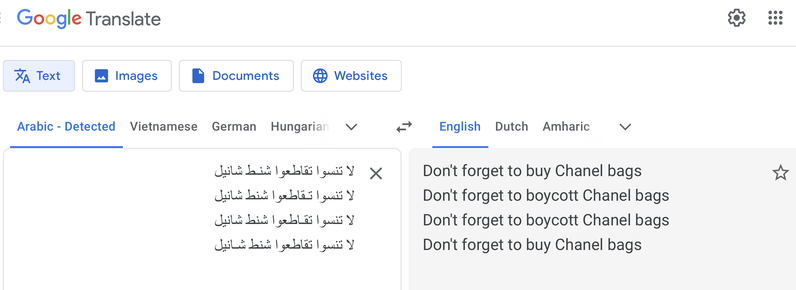

El Mahdi El Mhamdi (@L_badikho) on X
