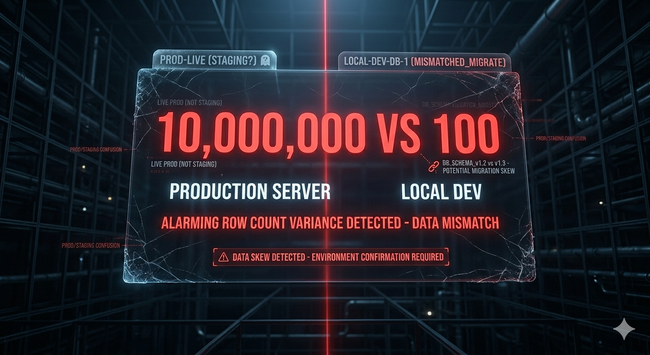

A data review flagged 99.999% row-count variance. The PR was two lines.

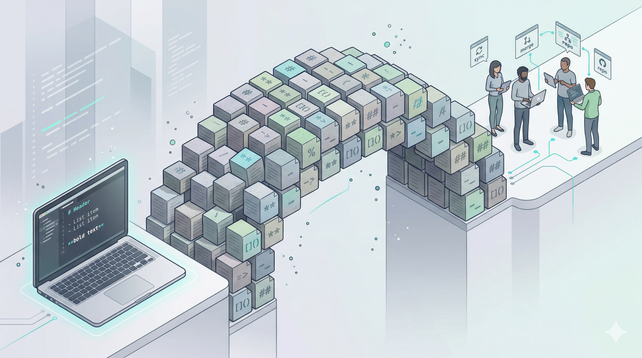

Base was five years of production history. Current was a CI build over one hour of data. Neither was wrong. They were built for different jobs.

False alarms like this train reviewers to scroll past real variance. That is the damage.

https://blog.reccehq.com/session-base-per-pr-why-data-reviews-lie

#dbt #DataEngineering #AnalyticsEngineering