@davidaugust @audioflyer79 @alisynthesis @blterrible One of the things humans are really, really good at is adapting to tools. (I suspect tool use and invention are more fundamental to our intelligence than pattern matching.)

This is one reason research into human-robot interaction is so challenging - the human will adapt their actions and expectations to the tool after just a handful of uses, and then won't be able to give good feedback about how easy or difficult it is to use or what change in performance they would expect.

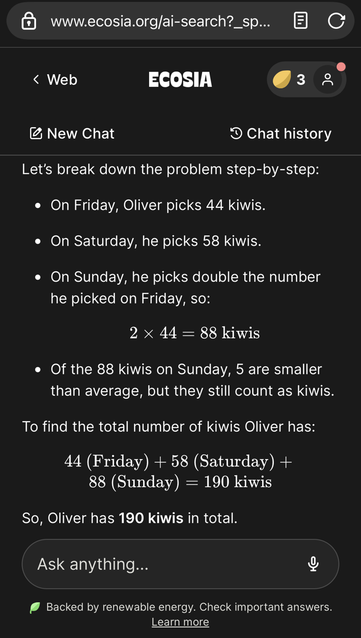

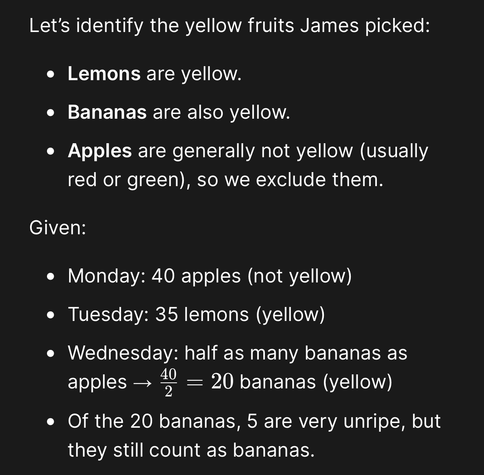

This means that, because we have been trained in this particular form of call and response, the mere fact that the system treats a question like an elementary school math test may predispose the user to assume that they *intended* the model to treat it that way, and not to realize that their original intention was to gather a different kind of information about the system.

That's one thing that makes the Apple paper particularly nice - they managed to intentionally avoid humans' built-in post hoc rationalizations and focus on the specific question they wanted answered.